Mapping controversial narratives related to the 2022 Russian Invasion of Ukraine in Polish-language social media

Facilitators

Kamila Koronska, Maria Lompe, Richard Rogers

1. Summary of Key Findings

The goal of this project is to map the most common controversial narratives in the Polish social media sphere regarding the war in Ukraine. We also attempt to discover whether the actors involved in the dissemination of these narratives are ordinary participants in online culture wars, for whom the conflict is another divisive topic to discuss, malicious actors and instigators supporting foreign governments, or other participants. The analysis is conducted for three platforms: Facebook, Twitter and Telegram. For each platform, the most frequent and persuasive narratives are identified and mapped using elements of OSINT and digital methods, including platform engagement, textual analysis (such as word trees) and network analysis (of actors and shared URLs).

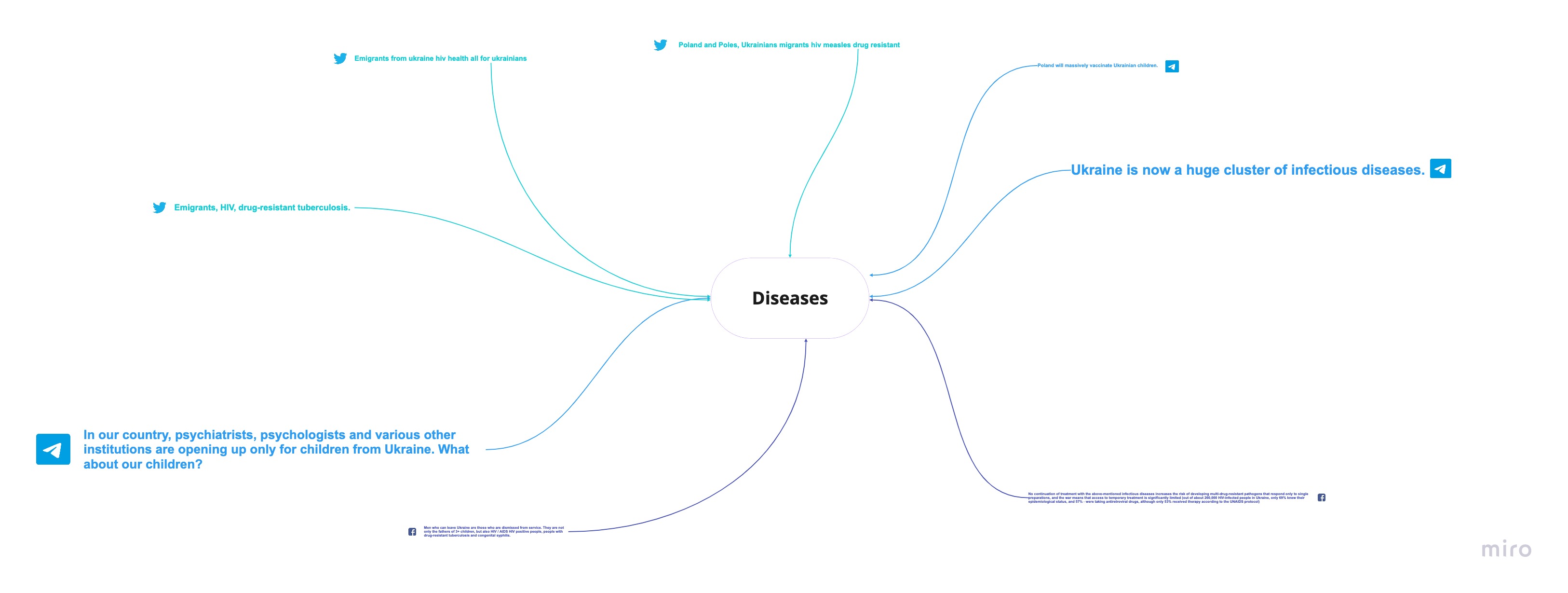

The controversial narratives that we found can be divided into seven thematic areas, in order of engagement: refugees, historical massacres, national and economic insecurity, disease, corruption, coronavirus and conspiracy theories. Most of these narratives are repeated across all platforms, though certain platforms emphasise one or another. Conspiracy narratives (concerning, for instance, biolabs, Covid-19, and the authenticity of the war itself) are present mainly on Telegram and largely absent on Facebook. Offensive language concerning incoming refugees is found on Telegram. Facebook is predominantly used as a platform to alert the public about the financial and security consequences if Poland continues to help Ukraine, but also to remind Poles of historical massacres that were orchestrated by the UPA (a former Ukrainian nationalist paramilitary group). On Twitter, the most persuasive narratives concern diseases allegedly spread by Ukrainian refugees. Apart from causing instability, the incoming Ukrainians are said to bring with them a series of conditions and diseases: they are alleged to be Covid-19 carriers and to have measles, drug-resistant tuberculosis and HIV. There is also a post that discusses how polio has not been eradicated in Ukraine.

Participants who actively debate the anti-Ukrainian content can be divided into a few distinctive groups.

(1) Superspreaders who share copious links to external content, encouraging people to check out YouTube videos, articles or posts from other social media groups or platforms, thus injecting controversial narratives or trying to establish new destructive behaviours. Some of the users in that group share posts in foreign languages (German, English, Russian) and in incorrect Polish. They often link to similar sources.

(2) Users who post frequently and solely on controversial narratives. Their active participation on these platforms does not generate much engagement, and the response to the content they share is usually minimal.

(3) Internet personalities with significant reach, such as right-wing politicians, populists, conspiracy theorists, amateur historians, novelists, professional news outlets, satirical news sites and more, who are participants in internet culture wars. Most of them are politicians and lawyers associated with right-wing political movements (most often the Konfederacja party), or public figures turned YouTubers who have channels dedicated to “natural” medicine, divination, magic, or coronascepticism.

(4) Users who post “organic pushback”: they engage in the divisive conversations on social media about the invasion in order to point out false claims and alert people not to fall for misleading content or disinformation.

2. Introduction

According to tallies by the UNHCR (2022) at the end of June 2022, more than 5.2 million refugees have fled Ukraine and relocated across Europe since the beginning of the Russian invasion, with over a million residing in neighbouring Poland. Poland has played an important role in facilitating rescue corridors for Ukrainians, at one point welcoming almost half of the total number of refugees. At the onset of the war, Polish media started to report an alarming rise in controversial narratives shared on social media platforms concerning Ukrainians (Wirtualne Media, Onet.pl, RMF24, Konkret24). Among these are calls for reducing aid to the Ukrainian refugees, evoking historical, economic or other arguments that seek to undermine public sentiment and eagerness to help. The stakes can be high. There have been extreme situations that threatened the most vulnerable, such as an orchestrated buyout of necessities and queues at gas stations. Since the war in Ukraine started, over 2 million Ukrainian refugees have fled to Poland seeking shelter. Journalists have reported a rise in controversial narratives found online concerning the motives of Ukrainian refugees as well as reactions to them. Our objective is to map these narratives and to identify the actors who spread them in the Polish social media sphere.

3. Research Questions

The research aims to answer the following questions:

Question 1: What are the most recurring controversial narratives related to the war in Ukraine in Polish-language content on Facebook, Instagram, Twitter and Telegram?

Question 2: Which narratives are most prominent overall and per platform?

Question 3: What actors are involved in spreading problematic content? Can we find evidence of foreign disinformation?

Question 4: Is there evidence of content moderation on the platforms (through the absence of particular content), performed either by the platforms themselves or through users’ self-moderation?

4. Methodology and initial datasets

We queried CrowdTangle, Meta's data source for Facebook and Instagram data, for both generic and controversial keywords and hashtags on Facebook. We queried Twitter (the academic API via the 4CAT software) for generic and controversial hashtags, and Telegram (via 4CAT) to locate controversial channels. For Twitter, we used a two-step query design. From the first set, a hashtag frequency list was collated, and the top ten ‘missing’ hashtags (those not in the original set) were added to the hashtag / keyword list, which was then queried anew. The data cover the period from 24 February to 28 March 2022, or the first month after the start of the 2022 Russian invasion of Ukraine.

For each dataset (Facebook, Twitter, Telegram) a word tree was generated via 4CAT (for a list of 60 words). Controversial narratives were then marked and checked against the original database (for context or possible citations). Subsequently, we labelled the most engaged messages and divided them into seven thematic areas (historical massacres, disease, etc.).

We then looked into the accounts sharing such content. We analysed the actors' online identity by checking the platforms' own verification signals (when the account was created and who registered it), and subsequently their coordination behaviour by plotting networks of the links that they share. Telegram grants users more privacy by default and is less transparent about its interface metrics, so we were less able to verify the actors’ identities, concentrating instead on the authenticity of their coordination behaviour. We looked for signs of artificial activity such as frequency of posting, externally linked profiles, domain registration data of the shared links, and the languages in which these actors were posting. These provide important clues for investigating the motivation of these actors. Depending on the platform, we found a range of different types of engagement, motivation and methods of persuasion.

5. Findings

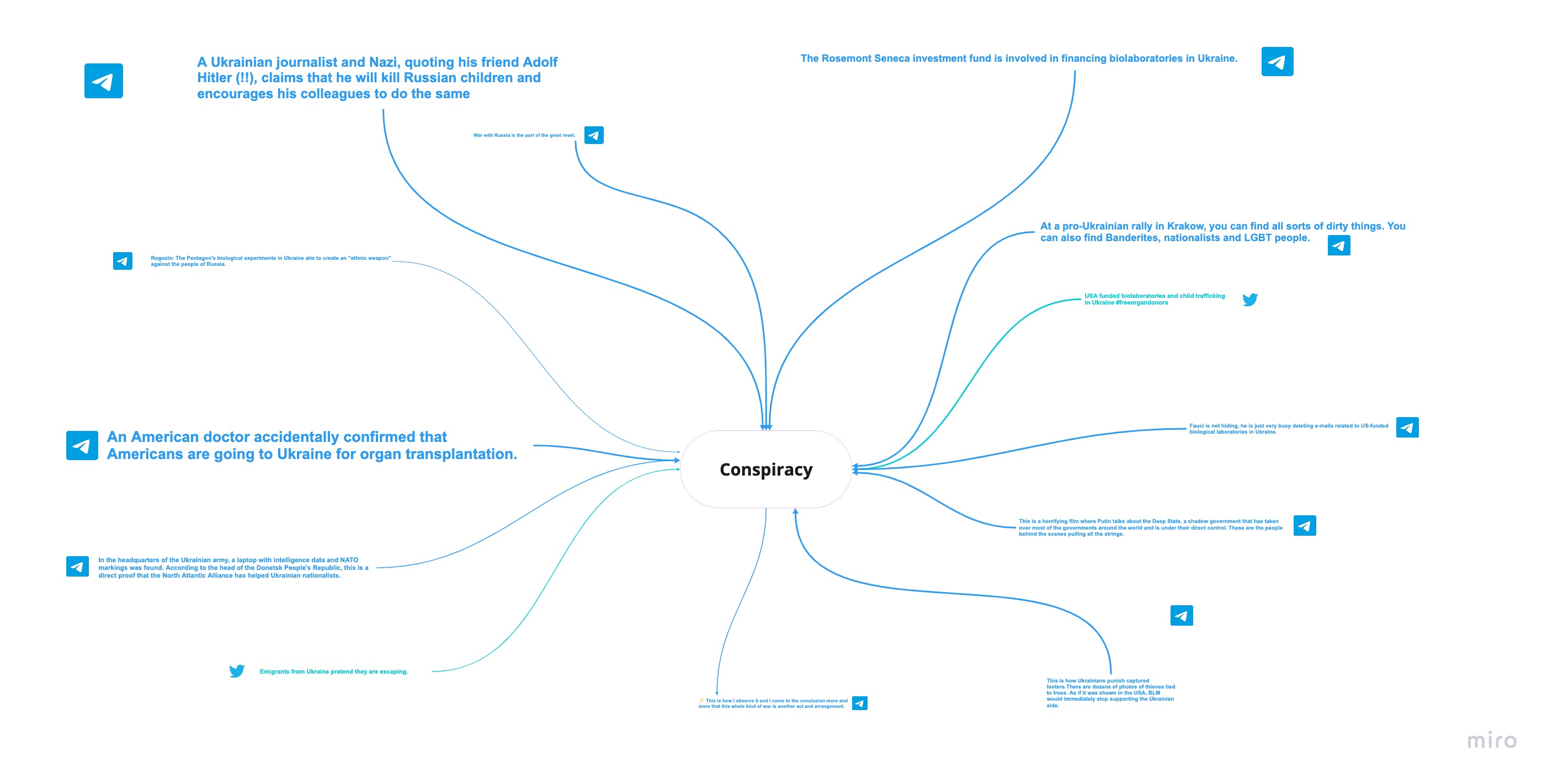

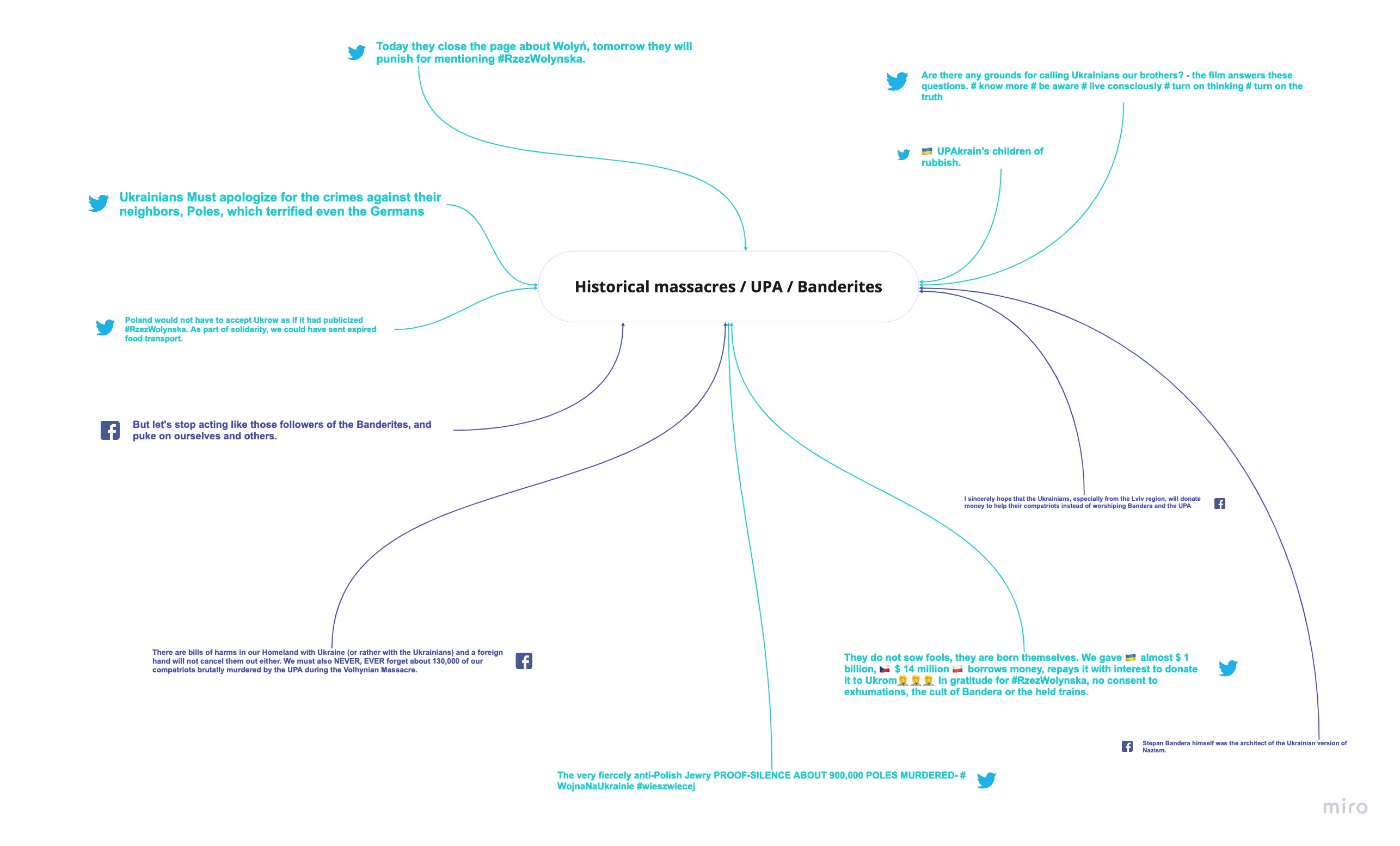

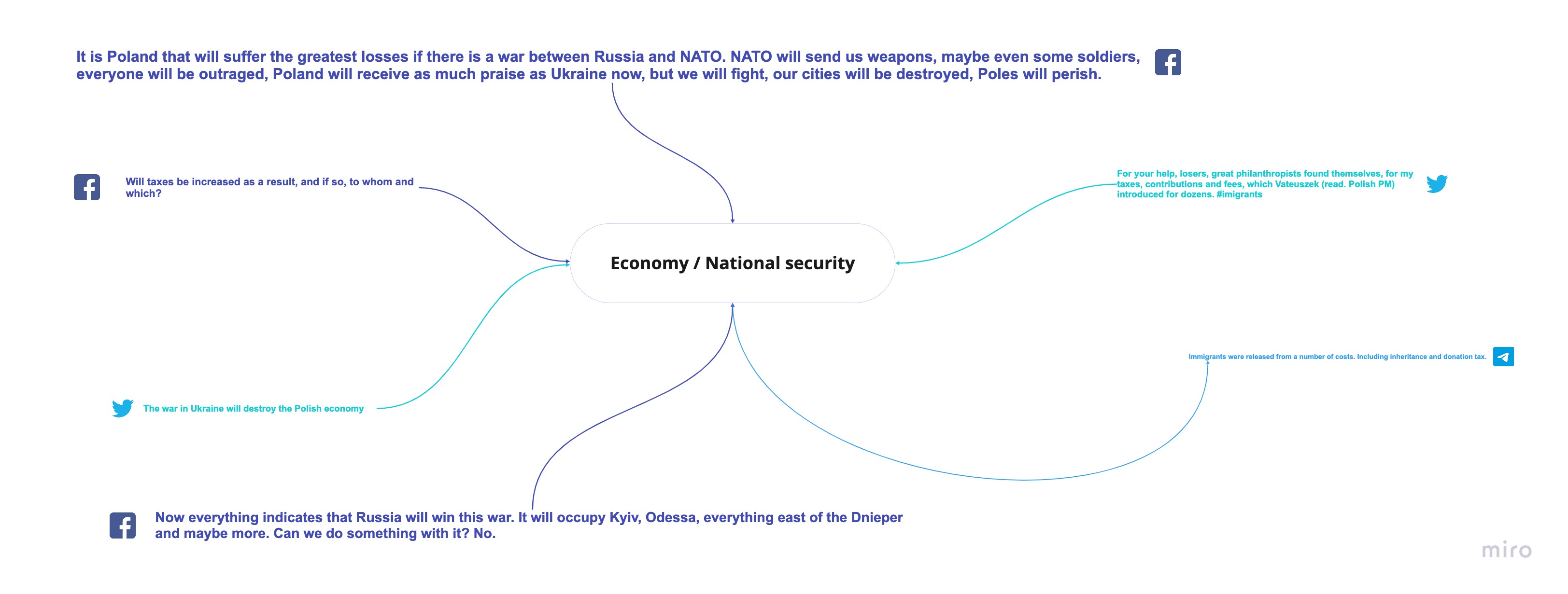

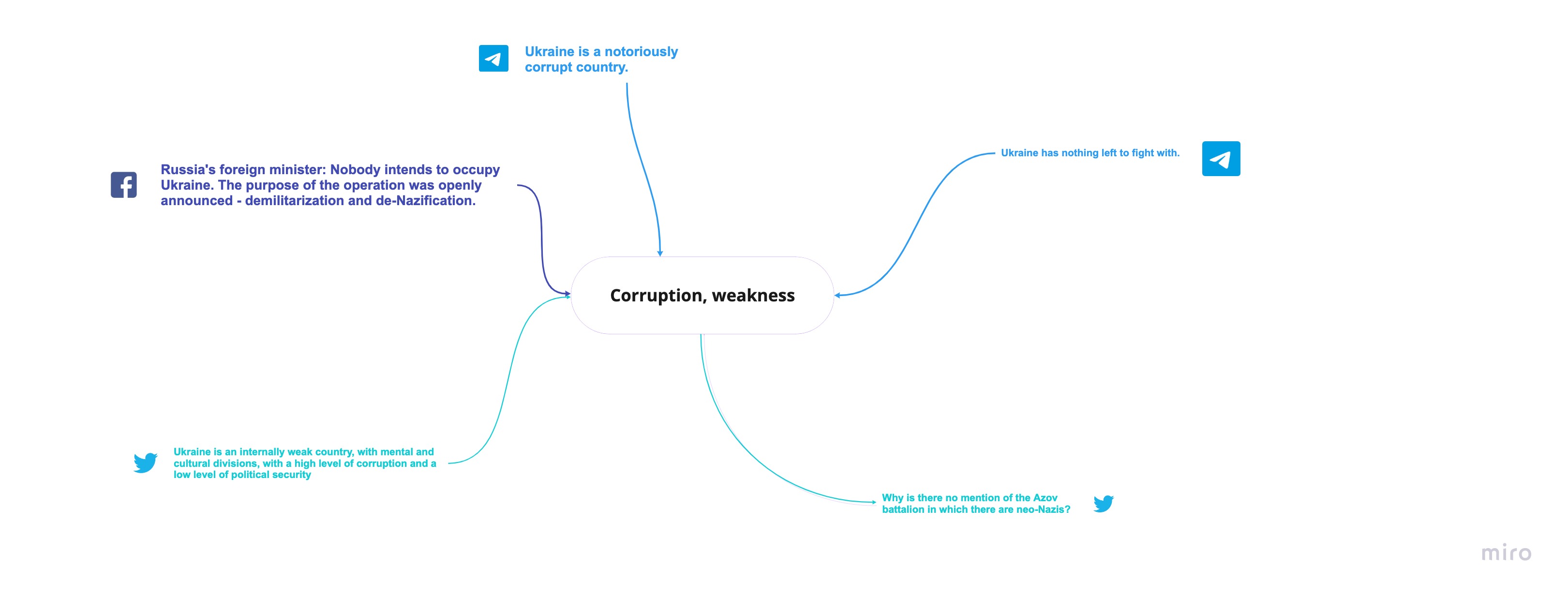

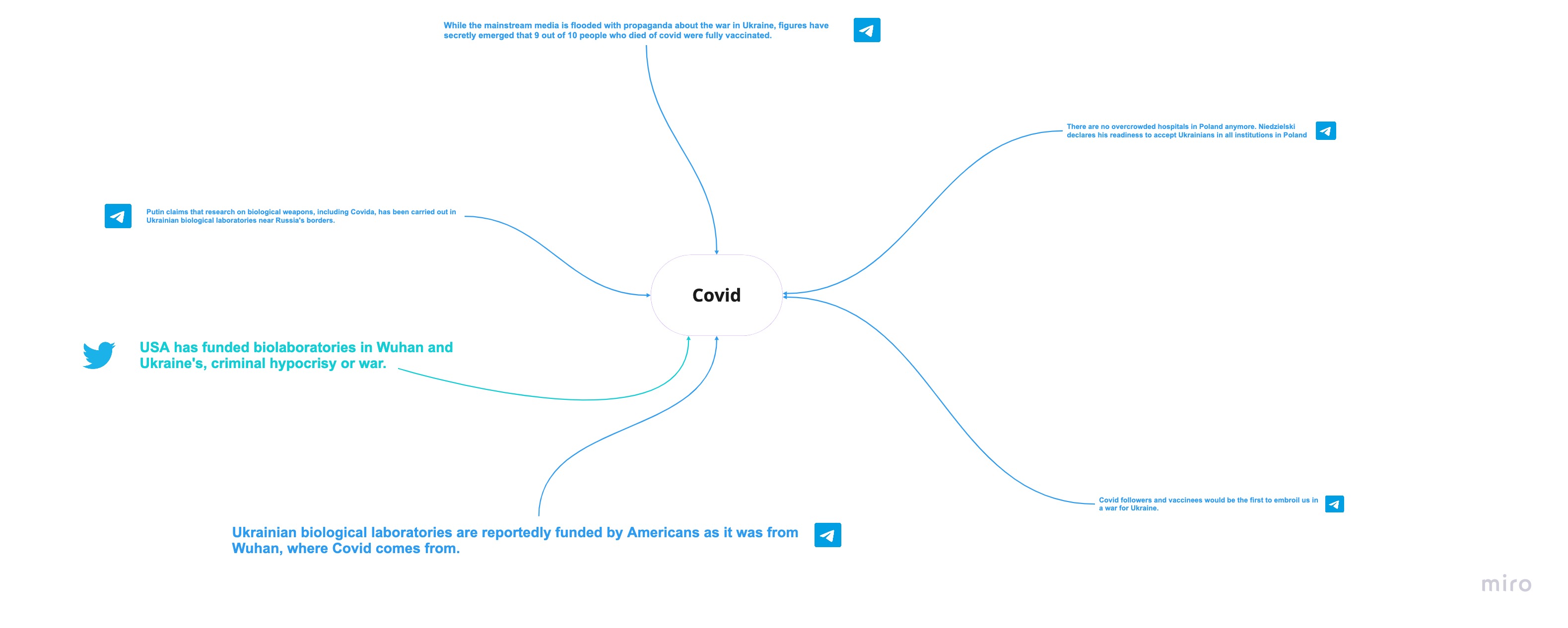

1. Narratives

As stated above, at the onset of the war, there were at least seven distinctive narratives surrounding the conflict. All of them were present in the sample of 11 public Telegram channels that we analysed (fikcja, infowarpl, konfederacjapoland, konfederacjapolski, koronaskandalpl, NajNewsy, NiezaleznyM1, ukrainarosja, wolna_polska, wolni_ludzie). Thematic areas are less diverse on Facebook and Twitter, however, as communities there discuss fewer topics surrounding the conflict (mainly historical massacres, diseases, and refugees).

The most prominent narratives on Telegram are conspiracy theories. They borrow from the other problematic thematic areas, but have an agenda complementary to spreading disinformation: one that erodes the quality of conversation around the Russian invasion or tries to encourage destabilising behaviours. Through such narratives, some Telegram users are encouraging people to withdraw large sums of money, buy excessive amounts of petrol, and refrain from following updates on the war from mainstream media. The most prominent conspiracy theories on Telegram concern the presence of biolabs in Ukraine. These are supposedly U.S.-run or otherwise associated with the U.S., and connected to child trafficking and the spread of new variants of the coronavirus. They are also allegedly no longer under the control of the Ukrainians. Other theories try to find a link between the outbreak of the coronavirus pandemic and the war, or blame Ukrainians for fabricating the invasion or hurting their own civilians in order to gain attention from the West. Such theories are usually inserted into long messages that contrast with the spontaneous messaging patterns of other users and are often accompanied by links to external “informational” content and imagery. Most of the time, conspiracy theories are posted anonymously, but we also saw suspicious profiles with generic aliases posting extensively. For these accounts, a network analysis was performed using their linking profiles, which we discuss in detail in the Networks section.

While the conversations on Telegram have users discussing conspiracy, the Twitter accounts are predominantly posting about historical massacres, with references to Ukrainian nationalism and the UPA, the historical nationalist paramilitary group. Accounts sharing material about historical massacres on Twitter, however, have few retweets or otherwise do not receive much attention. When content shares are considered, the most popular controversial narratives appear to be those related to current threats, such as those embedded in stories about diseases and refugees, rather than past issues. On Twitter some users are also keen to link other diseases with the presence of Ukrainian refugees in Poland. Ukrainians allegedly spread Covid-19, measles, drug-resistant tuberculosis, and HIV. Messages on Twitter are characterised by a reliance on concepts and catch-phrases. This may be due to the characteristics of the platform (a limit of 280 characters), but also to an attempt by actors who spread controversial narratives to encourage users to open a link. Some of the narratives also include conspiracy theories.

Much like on Twitter, most of the controversial narratives found on Facebook prey on the problematic Polish-Ukrainian history to deny legitimacy to helping Ukrainian refugees, whether in the form of social programs or through ordinary citizens. Some Facebook users, such as Leszek Samborski, go as far as to say that Ukrainians, especially those from Lviv, should use the financial aid that they have been given to help their compatriots rather than openly support “Bandera and the UPA” (the historical nationalist paramilitary group), or refer to Ukrainians as “resettlers”.

The accounts that had the biggest reach and prioritised discussions on problematic topics at the time of a nation-wide mobilisation to help Ukraine were those of right-wing politicians: Sławomir Mentzen ('Poland will receive as much praise as Ukraine now, but if we will fight, our cities will be destroyed, and Poles will perish'), Grzegorz Braun ('will taxes be increased as a result, and if so, to whom and which?'), and Jacek Wilk ('We must also NEVER, EVER forget about 130,000 of our compatriots brutally murdered by the UPA during the Volhynian Massacre'). These politicians are known to use basic economic and national defence theories to argue their case; in our sample we saw them using this rhetoric to argue against helping Ukraine.

Fig.1 Conspiracy Theories narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

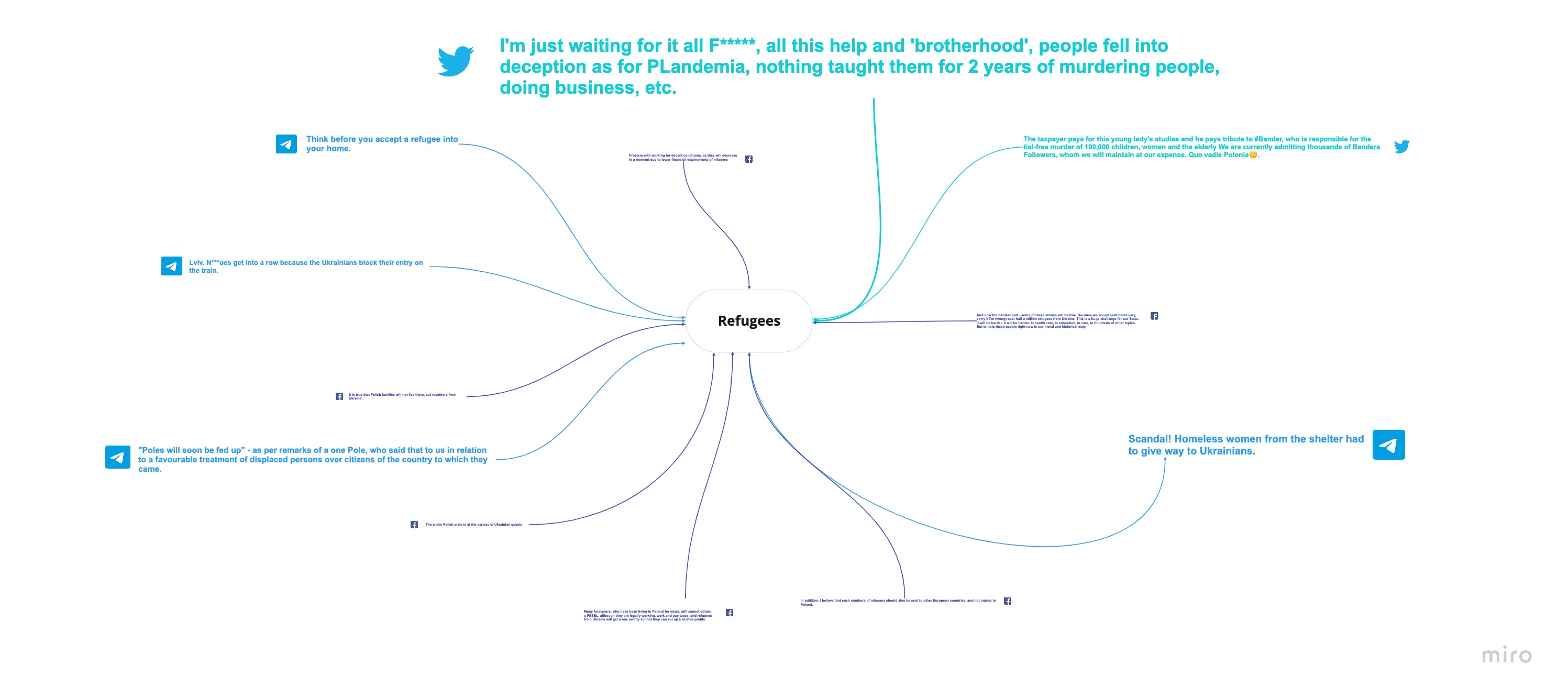

Fig.2 Refugees narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

Fig.3 Diseases narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

Fig.4 Historical massacres/ UPA/ Banderites narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

Fig.5 Economy / national security narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

Fig.6 Corruption, weakness narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

Fig.7 Covid narrative cluster (data sampled from Telegram, Twitter, Facebook, date range: 24 February to 28 March, 2022)

2. Actors

Having established the most frequently shared controversial narratives (per platform), we sought to verify those responsible for disseminating such content and inquire into their online behaviour. Specifically, we are interested in the online identity of important nodes who intentionally inject conspiracy theories and disinformation into online debates.

Using textual analysis in the form of word trees, we singled out a number of Telegram personal accounts from our sample that spread conspiracy theories across all analysed channels. We then checked whether there was anything unusual about the frequency with which these accounts were posting. We selected the top 20 most active, non-anonymous accounts by the number of messages they posted, which together amounted to over six million messages. Among these 20 most active accounts, we also saw those sharing controversial narratives.
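The selection step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `author` field name and the representation of anonymous posts as a missing or empty author are hypothetical, and the column names in an actual Telegram export may differ.

```python
from collections import Counter


def top_active_accounts(messages, n=20):
    """Return the n most active named accounts as (author, message_count) pairs.

    `messages` is an iterable of dicts with a hypothetical "author" key;
    anonymous posts are assumed to have a missing or empty author.
    """
    counts = Counter(
        m["author"]
        for m in messages
        if m.get("author")  # skip anonymous posts
    )
    return counts.most_common(n)


# Toy data standing in for an exported message table.
sample = [
    {"author": "user_a", "body": "first message"},
    {"author": "user_b", "body": "second message"},
    {"author": "user_a", "body": "third message"},
    {"author": None, "body": "anonymous message"},
    {"author": "", "body": "another anonymous message"},
]

top = top_active_accounts(sample, n=2)
```

The resulting ranking only measures posting volume; whether an account shares controversial narratives still has to be checked against the word-tree results.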

We observed that some Telegram accounts are not only posting frequently but also in different languages. Since there have been press accounts about possible malicious involvement of foreign actors, we thought it was important to verify their command of the Polish language.

Among the accounts that were flagged as suspicious, five forwarded or wrote messages in languages other than Polish: Z***ia, E***a, T***da, A***el, and G***rd. It must be noted, however, that the accounts were often flagged as using foreign languages because they forwarded a lot of content from other Telegram accounts run in languages other than Polish. All these accounts, with the exception of one account that was only posting in German, showed a good command of Polish.

All these accounts are actively sharing conspiracy and hateful messages, sometimes with links to domains registered in Russia. They seem to favour forwarding messages from others or their closed channels, rather than posting themselves. Indirect posting can be a particular form of Telegram activity, but it is also a clever technique to avoid manual checks on IDs that could be done with Telegram bots such as userinfobot.

In addition, these accounts also seem to understand Russian well, and find content in Russian useful to explain their agendas. We also saw that some of these accounts, such as Z***ia and A***el, were later deleted. Checking the most active Telegram accounts and verifying their language skills and messaging patterns allowed us to make initial assumptions about their motives, as we observed that actors, especially in the Grupa Sympatyków Konfederacji and Konfederacja PL channels, appear to amplify such content. The accounts we found suspicious on Telegram show patterns of synchronised sharing. They shared similar content, mostly video content on YouTube and links to the blog section of a controversial legal advisory website, legaartis.

On Twitter, narratives about Ukrainian nationalism and the UPA, but also about financial issues related to Poland’s willingness to accept refugees, are published primarily by conservative accounts that share many posts about historical topics. Almost all accounts publishing controversial narratives were conservative and alt-right in nature, and linked to news sites that are problematic (such as legaartis). The most active and retweeted account belongs to a defence journalist, Jaroslaw Wolski. Other accounts with a high number of retweets belong to users with conservative sentiments, such as pro-life advocates or enthusiasts of Polish history and historical memory who publish from a nationalist angle, oftentimes using derogatory language. None of these accounts, however, shared the controversial narratives that we discovered with word trees. The problematic narratives in our sample were primarily shared by one account which, although active, has few followers, and most of its retweets come from one person. Its posts discuss narratives that fit into all seven of the clusters we distinguished (refugees, historical massacres, national and economic insecurity, diseases, corruption, coronavirus and conspiracy theories).

In the top 20 most active accounts on Facebook from our sample, we have not seen any non-mainstream, problematic accounts that would not be known to the public. The biggest reach on Facebook was attained by the right-wing politician Sławomir Mentzen (109,056 total interactions), whose network generated almost the same number of interactions as the high-profile Polish magazine OKO.press (116,384 interactions) in the same time frame. The accounts that primarily publish narratives about Ukrainian nationalism and the UPA on Facebook are Ukrainiec nie jest moim bratem ("A Ukrainian is not my brother") together with a few right-wing historical portals. Wróżbita Maciej (Fortune teller Maciej) primarily posts about tax increases and economic problems due to the increased presence of Ukrainian refugees in Poland, as did Leszek Samborski. Michal Urbaniak, on the other hand, publishes posts about the weakness and corruption of Ukraine that are in line with the Sputnik narrative.

The Facebook account that was the most alarming in our sample was Ukrainiec nie jest moim bratem, which openly encouraged hateful feelings towards Ukrainians. This account’s reach, however, was minuscule compared to other Facebook users from our sample, as it only had 2,726 total impressions, with content shares not exceeding 166. In the final stages of this research we also learnt that the administrator of this group (a primary school teacher) had been named and is believed to have been referred to the prosecutor by the Sosnowiec city council.

The sample of problematic accounts sourced from Facebook, along with the controversial narratives they have shared, appears innocent in comparison to content sourced from Telegram. It also pales in comparison to some press accounts about the involvement of potential malicious actors on the platform. The six narratives present on Facebook came from discussions mostly held by a mix of internet personalities who were involved in online culture wars but who we believe were not foreign, such as right-wing and populist politicians, conspiracy theorists, influencers, professional news outlets, amateur historians, nuclear energy researchers, novelists, well-known NGOs, satirical news sites, travel and lifestyle bloggers, debunking non-profit organisations and celebrities.

In addition, we have also gathered evidence that some of these actors are involved in activity that we describe as organic pushback, by which they openly dispute incorrect or deliberately misleading stories concerning the Russian invasion of Ukraine and the exodus of Ukrainian refugees. Interestingly, these pushbacks are almost proportional to the controversial narratives, by which we mean they debunk the most prominent anti-Ukrainian narratives on Facebook, such as economic or national security concerns. For example, Własny punkt widzenia writes: 'At the moment, most of the refugees who came to us are women with children, and there is hardly any desire in them to seize the eastern parts of Poland as far as the Vistula line, as some people draw it in their fantasies'. Disputed, too, are arguments against helping Ukrainian refugees on the basis of historical massacres. As Spotted Tarnów writes: 'Stop judging people through the prism of their great-grandparents' actions. Poland wasn't a saint either. The above points are a message for people who fell victim to Russian trolls and disinformation'.

The pushback could be an effect of an exhaustive educational campaign aimed at alerting people in Poland to Russian disinformation, combined with efforts by Meta to curb problematic content. Auxiliary initiatives from the private sector that turned passive social media users into active players in debunking misinformation, such as zglostrolla.pl (for Twitter and Facebook in particular), might also have helped to clean up problematic content from the platform. It should be said, however, that in our Telegram data we have seen a number of links directed to Facebook and Instagram Stories. So when we observe that there is less problematic content with visible engagement on Facebook than on Telegram, we refer to written content.

3. Networks

Fig.8 Network visualization created with Gephi: analysis of actors and external links they shared on Telegram.

To better understand the context in which these controversial narratives appear, we also looked into the type of external URLs these actors were sharing. External links show the sources that are referenced, the extent to which posts reference the same or similar sources, and whether there are clusters of accounts and (particularly problematic) sources, which then invites further scrutiny of the accounts.

To do that, we plotted a network of all non-anonymous (at the time of the research) and non-empty messages from Telegram with external links in them (2,891 in total). We shortened each link to the domain only, and for links to social media posts or to video sharing platforms we used the generic domain names (all YouTube links were grouped as youtube.com and all Facebook posts as facebook.com). We then ran the modularity algorithm in Gephi (gephi.org) to detect groups. Nodes were also weighted and then sized based on out-degree ranking measures (so we know which nodes link most frequently within each cluster). ForceAtlas2 and Label Adjust were used to lay out the graph.
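The link preparation described above can be approximated with a short Python sketch. It covers only the domain shortening, the account-to-domain edge list and a weighted out-degree count; modularity detection and layout were done in Gephi and are not reproduced here. The field names (`author`, `urls`) and the `GENERIC_DOMAINS` table are assumptions made for illustration, not the project's actual data format.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical grouping table: hosts collapsed into one generic domain.
GENERIC_DOMAINS = {
    "youtu.be": "youtube.com",
    "m.youtube.com": "youtube.com",
    "m.facebook.com": "facebook.com",
}


def to_domain(url):
    """Shorten a URL to its domain, grouping platform hosts generically."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return GENERIC_DOMAINS.get(host, host)


def build_edges(messages):
    """Count (account, domain) edges over non-anonymous messages with links."""
    edges = Counter()
    for m in messages:
        if not m.get("author"):
            continue  # anonymous messages are left out of the network
        for url in m.get("urls", []):
            domain = to_domain(url)
            if domain:
                edges[(m["author"], domain)] += 1
    return edges


def weighted_out_degree(edges):
    """Total number of links each account sent out, summed over all domains."""
    degree = Counter()
    for (author, _domain), weight in edges.items():
        degree[author] += weight
    return degree


# Toy data standing in for the exported messages.
messages = [
    {"author": "acct_1", "urls": ["https://www.youtube.com/watch?v=abc",
                                  "https://youtu.be/xyz"]},
    {"author": "acct_1", "urls": ["https://legaartis.pl/blog/post"]},
    {"author": "acct_2", "urls": ["https://m.facebook.com/groups/1"]},
    {"author": None, "urls": ["https://example.org/"]},
]

edges = build_edges(messages)
degree = weighted_out_degree(edges)
```

An edge list of this shape (source, target, weight) can be written to CSV and imported into Gephi, where the community detection and node sizing would then be applied.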

For network analysis on Twitter and Facebook we used CorTexT (www.cortext.net) to build networks from the collected data. We analysed both the links provided by the accounts we highlighted as publishing controversial narratives and the links published across the database. Looking just at the linking activity of actors involved in messaging on the channels that were analysed, we could see that some were much more active in referencing other sources, and used cross-sharing techniques (forwarding messages rather than writing them themselves).

In all, through the use of modularity analysis we could subdivide the network into the following clusters:

Pink nodes – posting frequently but providing a much lower number of external links

Green nodes – posting a large number of links, encouraging people to check out articles, YouTube accounts, other groups on Facebook or Telegram, linking frequently to legaartis.pl

Blue nodes – suspicious accounts, but with different activity than the Pink, Green or Raspberry clusters

Raspberry pink nodes – suspicious accounts, but with different activity than the Pink, Green or Blue clusters

Actors from the “Green” cluster were inviting people to watch YouTube videos, read articles and blog posts, or branch out to Facebook groups and stories, as well as to other Telegram channels (Fig.1). That set them apart from the frequent messengers of the “Pink” cluster who, although they posted more often, shared relatively little linked content (hence their scant visibility in the Fig.1 graph), and when they did, their sources were less varied than those of the “Greens”. Blue and Raspberry Pink accounts posted less frequently and linked to different suspicious external content, but we could not independently label them as superspreaders.

This finding was a first step in establishing that active engagement of some of the participants in these channels was due to the casual nature of their conversations, and did not revolve around comprehensive “knowledge-sharing” as it did in the case of the “Green” cluster. That allowed us to refine our focus on actors who not only were spreading controversial narratives, but were also involved in frequent messaging with links to misleading, hateful or polarising content.
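The contrast between frequent posters and frequent linkers can be made concrete with a small per-account profile. The sketch below is only an illustration of that reasoning: the input shape, the function name and the sample values are hypothetical and do not come from the project data.

```python
from collections import defaultdict

def profile_accounts(messages):
    """Summarise posting and linking behaviour per account.

    `messages` is an iterable of (account, domains) pairs, where `domains`
    lists the external domains linked in one message (possibly empty).
    """
    stats = defaultdict(lambda: {"messages": 0, "links": 0, "domains": set()})
    for account, domains in messages:
        entry = stats[account]
        entry["messages"] += 1
        entry["links"] += len(domains)
        entry["domains"].update(domains)
    return {
        account: {
            "messages": entry["messages"],
            "links_per_message": entry["links"] / entry["messages"],
            "distinct_domains": len(entry["domains"]),
        }
        for account, entry in stats.items()
    }

# Made-up sample: one chatty account with few links, one link-heavy account.
sample = [
    ("frequent_poster", []),
    ("frequent_poster", []),
    ("frequent_poster", ["youtube.com"]),
    ("frequent_poster", []),
    ("link_sharer", ["legaartis.pl", "youtube.com"]),
    ("link_sharer", ["rumble.com"]),
]
profiles = profile_accounts(sample)
```

In this toy data the first account posts more often but has a low links-per-message ratio and a single source, while the second posts less but links more and to more varied domains, which mirrors the kind of difference described between the Pink and Green clusters.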

Accounts belonging to the “Green” cluster most often linked to videos on video-sharing platforms such as YouTube (168 in total) and Rumble (29 in total). These were often videos disputing the authenticity of the war itself, blaming the Polish PM for providing the “Nazi” Azov battalion with Polish military uniforms, or discussing the presence of biolabs in Ukraine. Some of these videos are interviews with Zygfryd Ciupka, a QAnon-linked, self-proclaimed expert on a range of difficult or scientific topics.

The second most popular citation was the blog section of a supposed legal advisory website, legaartis.pl (273 in total), which itself peddles controversial narratives around the Russo-Ukrainian war. The website has previously been flagged by the fact-checking units of several media outlets in Poland, such as Konkret24.pl.

The rest of their links were a combination of posts from blogging sites such as WordPress and Blogspot (38 in total) and social media content from Facebook (31 in total), Twitter (24 in total) and TikTok (20 in total). Many of their external links pointed to other channels on Telegram (181 in total), some of which had messages only in Russian (nastikagroup, atodoneck), German (fufmedia, bleibtstark, NeuzeitNachrichten) or English (QNewsOfficialTV, SGTNewsNetwork, LauraAbolichannel). Importantly, among informational websites, these accounts linked most often to sputniknews.com, which is registered in Russia (27 in total), and only then to sensationalist, right-wing news portals with domains registered in Poland, such as nczas.com (12 in total), medianarodowe.com (12 in total), magnapolonia.org (8 in total) and dorzeczy.pl (7 in total). The “Green” accounts also linked 9 times to stronazycia.pl, an anti-abortion website that does not disclose its DNS information.

A large number of the links shared by the “Green” cluster pointed to domains registered in Poland (387), most of them to the blog section of the problematic legaartis.pl website (273).

The remaining external links to domains registered in Poland led to right-wing, conservative sites: nczas.com, medianarodowe.com, magnapolonia.org, dorzeczy.pl, naszapolska.pl, tvp.info and narodowcy.net.

There were also a number of links to domains registered in the United States (65), the Russian Federation (42) and Canada (34), the last mostly driven by links to the video-sharing platform Rumble.com. The most linked U.S.-registered domains were alt-tech platforms such as bitchute.com and the alternative news site beckernews.com, but also brondlapolakow.pl, a petition website created by a Polish Christian Culture Association on 24.02.2022 that pressures the Polish government to simplify gun ownership regulations in the wake of the Russian-Ukrainian war. Among domains registered in Russia, the most often shared were links to sputniknews.com (27) and the German-language version of the RT website (4). For 60 domains we were not able to find any registry data in the WHOIS database, and 6 domains had their addresses protected.

On Twitter, actors who publish controversial narratives link primarily to other Twitter accounts (which share similar narratives) and to YouTube. The account from which the greatest number of controversial narratives spreads (despite a small number of retweets and followers) also shares the greatest number of external links, primarily to YouTube (see Visualisation 3). This account particularly often shares links to the “ARIOWIE” channel, which revolves around corona-sceptic narratives and alternative medicine, as well as to the “ARMAGEDON” channel, which publishes videos by Wojciech Olszański, an actor who produces extreme right-wing content under the pseudonym Aleksander Jabłonowski. Olszański is a corona-sceptic and openly supports the policies of Vladimir Putin and Alexander Lukashenko. He actively participates in events organised by right-wing circles in Poland.

Following the logic of the network analysis on Telegram, we can also distinguish clusters on Twitter. The light-green cluster consists mainly of one account that posts often and with a large number of external links (mainly to YouTube or other Twitter accounts). The other colours mark the remaining accounts, which mainly post text messages and only occasionally link to external sources.

6. Conclusions

In line with multiple press accounts, our research has confirmed that the Russian invasion of Ukraine and the exodus of Ukrainian refugees into Poland have prompted much discussion on social media, including the spread of controversial narratives. We used the term 'controversial narratives' because the stories not only visibly incite controversy in Poland but also give us an opportunity to understand other collective phenomena surrounding the topic. The most recurrent controversial narratives about the war in Ukraine in the Polish-language social media sphere fall into seven areas: refugees, historical massacres, national and economic insecurity, diseases, corruption, coronavirus and conspiracy theories. Each of these areas had different levels of engagement and occurrence depending on the platform studied. The most engaged-with controversial narratives were found on Telegram and involved conspiracy theories about a fake war, biolabs and active Nazi groups from Ukraine. The dominant narratives on Twitter concerned diseases supposedly spread by refugees from Ukraine and the general economic destabilisation related to the war. Facebook narratives, on the other hand, were primarily about historical massacres and the economic implications of the mass exodus of Ukrainians to Poland, and were mostly shared by right-wing politicians or celebrities with a conservative worldview.

We categorized actors disseminating controversial narratives into four groups:

(1) Superspreaders, who primarily linked to external sources (most often sites with suspect credentials such as legaartis, blogs run by conspiracy theorists such as thebabilonempire, or YouTube channels that often share 'alternative knowledge').

(2) Users who post frequently without referring to external sources, focusing instead on text messages.

(3) Internet personalities with significant social media reach, such as right-wing politicians, populists, conspiracy theorists, amateur historians, novelists, professional news outlets, satirical news sites and more, who are participants in internet culture wars.

(4) Users who post “organic pushback”, that is, users who engage in divisive conversations on social media about the war in order to debunk false claims and alert people not to fall for misleading content or disinformation.

As the Telegram network analysis shows, particularly important actors for the spread of controversial narratives are those belonging to the green cluster, that is, actors who rarely post themselves but forward a large number of posts that refer primarily to external URLs of sources that are difficult to verify. On Telegram, there appears to be an extensive information campaign with disinformation and misinformation about the war and its impacts. The much smaller scale and prominence of controversial narratives on Facebook and Twitter might suggest that we have found some indications of moderation. We also observe a large amount of what we call organic pushback on Facebook, shared by actors confronting controversial narratives.

Further analysis is necessary to determine the extent of foreign disinformation campaigning. Such analysis could rely on the methodology we adopted in this study for tracking suspicious actors, starting with narratives and following accounts, external links and links to other platforms. We hope that this mode of analysis, which uses publicly available data (OSINT) and digital methods, will inspire others to use this methodology to study misinformation and problematic narratives, which can lead to the discovery of problematic actors and entire networks of problematic content.

7. References

CorTexT (www.cortext.net).

Coraz więcej fake newsów w sieci. Szokująca liczba prób dezinformacji w Polsce. (2022, March 16). Onet. Retrieved June 20, 2022, from https://wiadomosci.onet.pl/kraj/coraz-wiecej-fake-newsow-szokujaca-liczba-prob-dezinformacji-w-polsce/4vvxrjc.

Gephi (gephi.org).

Grochot, A. (2022, March 11). Groźne patogeny w ukraińskich laboratoriach? Polecenie WHO i rosyjska dezinformacja. RMF24. Retrieved June 20, 2022, from https://www.rmf24.pl/raporty/raport-wojna-z-rosja/news-grozne-patogeny-w-ukrainskich-laboratoriach-polecenie-who-i-,nId,5886097#crp_state=1.

Kowalski, J. (2022, March 16). 10 tys. profili w social media rozpowszechnia w Polsce rosyjską dezinformację, od kilku dni są hiperaktywne. Wirtualnemedia. Retrieved June 20, 2022, from https://www.wirtualnemedia.pl/artykul/wzmozona-aktywnosc-dezinformacyjnych-kont-polski-internet-jakie-narracje-jak-to-dziala.

Wpisy o “przestępstwach uchodźców” na terenie Polski - dezinformacja z prorosyjskich kont. (2022, March 10). Konkret24. Retrieved June 20, 2022, from https://konkret24.tvn24.pl/polska,108/wpisy-o-przestepstwach-uchodzcow-na-terenie-polski-dezinformacja-z-prorosyjskich-kont,1098811.html.

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.
