
Objectionable Queries

Searching for Porn in App Stores

Team Members

Lead: Anne Helmond, Fernando van der Vlist, Esther Weltevrede (alphabetical)

Participants: Christina Meyenburg Dall Christensen, Emanuela Blaiotta, Janna Joceli Omena, Maggie MacDonald, Shefali Bharati, and Stephanie de Smale (alphabetical)

1. Introduction

The app stores have explicitly laid out guidelines for what can and cannot be on the platform. Content such as weapon building, torture and explicit sexual acts is expressly forbidden. These so-called “banned apps” contain themes such as gambling, pornography, weapons or explicit violence. We set out to explore what replaces the objectionable query and the biases that might lie in the Google Play Store. For our project, we wanted to examine what recommendations are offered when users look for objectionable content, or content that does not exist. We looked specifically into pornography because this theme falls into the “banned” category, so we could see what the store would recommend or redirect to when we searched for a term that we knew would not be there. We found it interesting to investigate the nature of recommendations and redirections for apps, and to map the potential biases. These findings may contribute to further insights and investigations, such as the patterns shared between banned apps, the position of platforms as gatekeepers, and developers' tactics for circumventing the algorithm design. (See our final presentation here.)

2. Initial Data Sets

- Link data set: Link Klipper was used to scrape the links of the apps returned by our queries, from which we retrieved unique app IDs.

- Similar App data set: the network of Google Play similar apps resulting from the unique IDs. It contains the following fields: link ID, title, category, rating, price, link, developer name, developer link, image source, long description, and related to.
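As a rough illustration of how unique app IDs might be recovered from a list of scraped links, the sketch below assumes the links are standard Google Play detail URLs in which the app ID is the value of the `id` query parameter. The function name `extract_app_ids` and the example links are hypothetical and do not come from the project's data set; the project itself did this step in a spreadsheet rather than in code.

```python
from urllib.parse import urlparse, parse_qs


def extract_app_ids(urls):
    """Return the unique app IDs found in the given URLs, in first-seen order."""
    seen = {}  # dicts keep insertion order, so this doubles as an ordered set
    for url in urls:
        query = parse_qs(urlparse(url).query)
        # Links without an `id` parameter (e.g. search pages) are skipped.
        for app_id in query.get("id", []):
            seen.setdefault(app_id, None)
    return list(seen)


# Hypothetical example links, for illustration only.
links = [
    "https://play.google.com/store/apps/details?id=com.example.blocker",
    "https://play.google.com/store/apps/details?id=com.example.vpn&hl=it",
    "https://play.google.com/store/apps/details?id=com.example.blocker",
    "https://play.google.com/store/search?q=porn&c=apps",
]

app_ids = extract_app_ids(links)
print(app_ids)
```

Parsing the query string, rather than splitting the whole URL on “=”, keeps trailing parameters such as a language code out of the ID.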

3. Research Questions

- When users search for content that is explicitly banned under the developer distribution agreement or developer guidelines, what does the app store offer as recommendations?

- What does this recommender system say about the organizational logic of the store?

4. Methodology

- Our methodology seeks to find the apps related to our specific query, the recommendation network, and the relations between the apps. The starting point of our project was designing a query, which resulted in the query words “porn” and “pornography”. “Porn” was searched as a country-specific query, using a VPN, for the following countries: Italy, Brazil, Canada, India, Denmark and the Netherlands. “Pornography”, by contrast, was a language-specific query: Pornografia (Italian), pornografia (Portuguese), Pornography (English), Pornografi (Danish), अश्लील साहित्य (Hindi).

- We were interested in the visuals of the app logos, as our subject is potentially graphic. DownThemAll was used to obtain the logos of the apps, which were then visualised with ImagePlot.

- Link Klipper was used to obtain all the URLs of the apps resulting from the query. The output was saved as a .csv file, and the IDs were then extracted with the “Text to Columns” function, using the “=” sign as the delimiter.

- These IDs were then fed into the DMI tool Google Play Similar Apps to obtain similar applications, with the following settings: “full app details”: “No”; “Language”: “Regional Language”.

- These results were then saved as a Gephi network file (“.gexf”) and a “.csv” file, in order to map the relations and clusters of the similar apps system. The network was visualised in Gephi using the “modularity class” function, which looks for nodes that are more densely connected to each other than to the rest of the network, for further categorical coding and analysis.

- In addition, we wanted to look at the presence of the different classes created in Gephi. We therefore coded the modularity classes according to the content of the apps within each class, e.g. “video platforms”, “roleplay”, “social contact”, etc. We then counted the records for each coded category and visualised them in Tableau as a bubble chart, with size representing the number of records and colour and label representing the category. This was then saved as a PNG.

- To better understand the recommendation logic of the Google Play Store, we also relied on the technique of visual network exploration.
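To make the idea behind the “modularity class” step more concrete, the sketch below computes the modularity score Q of a given partition on a toy network. It is only an illustration under stated assumptions: the graph is treated as undirected and unweighted with no self-loops, the app names and edges are hypothetical, and the code evaluates partitions that are supplied by hand, whereas Gephi's modularity statistic searches for a partition that scores well. It is not the procedure used in the project.

```python
from collections import defaultdict


def modularity(edges, community_of):
    """Modularity Q of a partition of an undirected, unweighted graph.

    Q = sum over communities c of [ L_c / m - (d_c / (2 * m)) ** 2 ],
    where m is the number of edges, L_c the number of edges inside c,
    and d_c the sum of the degrees of the nodes in c.
    """
    m = len(edges)
    intra_edges = defaultdict(int)
    degree_sum = defaultdict(int)
    for u, v in edges:
        degree_sum[community_of[u]] += 1
        degree_sum[community_of[v]] += 1
        if community_of[u] == community_of[v]:
            intra_edges[community_of[u]] += 1
    return sum(
        intra_edges[c] / m - (degree_sum[c] / (2 * m)) ** 2
        for c in degree_sum
    )


# Hypothetical toy network: two triangles of "similar apps" joined by one edge.
edges = [
    ("blocker_a", "blocker_b"), ("blocker_b", "blocker_c"), ("blocker_a", "blocker_c"),
    ("video_a", "video_b"), ("video_b", "video_c"), ("video_a", "video_c"),
    ("blocker_c", "video_a"),
]

# Partition 1: each triangle is its own class.
two_classes = {node: node.split("_")[0] for edge in edges for node in edge}
# Partition 2: everything in a single class.
one_class = {node: "all" for node in two_classes}

q_two = modularity(edges, two_classes)
q_one = modularity(edges, one_class)
print(round(q_two, 4), round(q_one, 4))
```

The partition that separates the two triangles scores higher than the single-class partition, which is the sense in which nodes in one class are “more densely connected together than to the rest of the network”.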

5. Findings

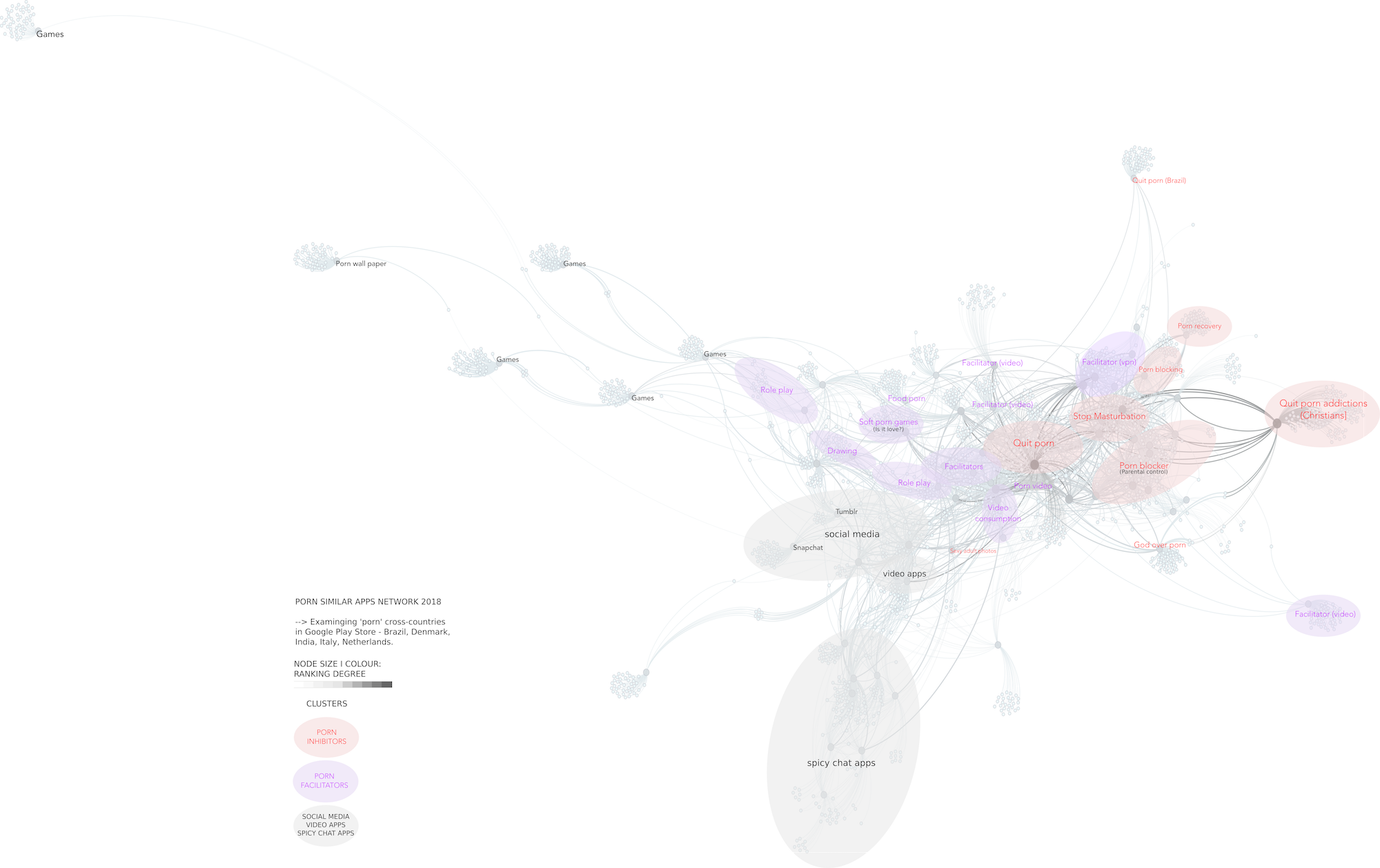

We found various categories, some of which stood out, such as “porn blocker” and “parental control”. The search results did not vary much when searching for “porn” from different VPN locations. They varied a lot, however, when we searched for “pornography” in the 6 different languages. From the porn similar apps network, and through the affordances of Force Atlas 2 (a layout algorithm available in Gephi), we could see how app store algorithms can organise practices (and not only content). We identified three layers of recommendations:

- First layer recommendation: anti-porn apps with explicit references to porn in the name (e.g. “Stop porn addiction”).

- Second layer recommendation: hidden porn facilitators. These apps cannot and do not explicitly mention their porn relatedness.

- Third layer recommendation: non-porn results, i.e. recommended alternatives related to a key node in the primary findings (other games, other social media, other biblical apps, other apps in a similar language).

Google Play Store app similarity network for [porn] apps

Why do we see clusters that facilitate porn implicitly, and why do we see social media? (see image). Google's “discovery algorithm”, or similar apps algorithm, increasingly focuses on user engagement: not only the usual signals (downloads, ratings) but also time spent in the app, how often it is opened, whether it is uninstalled, and whether it crashes. This is why Tumblr, Snapchat and Tinder are listed as top apps. The reference to specific social media platforms (Tumblr, Snapchat) is not arbitrary; they are connected to more explicit porn facilitators, for instance BIGO LIVE, a platform that facilitates streaming and watching porn.

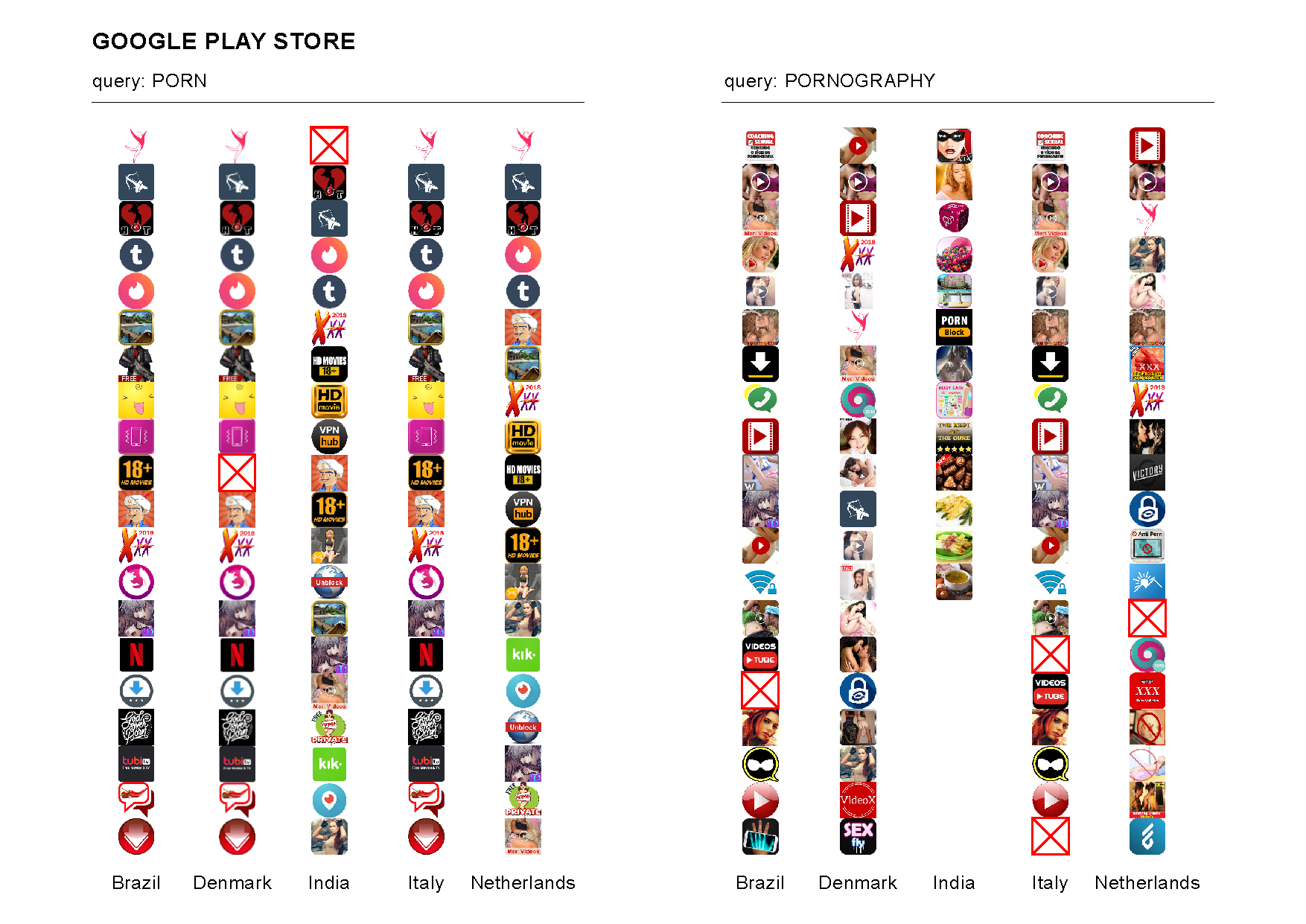

Top 20 results for the queries [porn] and [pornography per country]

In the Tableau visualisation, the categories that appeared most often among the recommendations and redirections were “video platforms” and “live video chat”. In the image analysis of the top results, we could see that the app logos included few explicit photos and were mostly illustrations and other “non-pornographic” images. We could also see that the top result in all cases was about limiting porn and stopping the addiction. Querying the term “porn” returned fairly homogeneous results across locations: the top two are Christian anti-pornography aids, followed by many VPN and streaming services, but none are pornographic. The local-language results included a lot of clickbait imagery, where the content is not actually pornographic, and facilitators for watching porn (VPNs).

6. Discussion

Our findings demonstrate, most essentially, the redirection of attention towards content that either discourages pornography use or covertly encourages it. This creates an implicit rather than explicit anti-pornography infrastructure. Considering that the Google Play Store does not permit porn apps, the logic and network of recommendations is multi-layered, with thematic clusters for each layer.

- First layer recommendation: anti-porn apps with explicit references to porn (stop masturbation, stop porn addiction, Christian porn addiction), recommended because “porn” is in the name.

- Second layer recommendation: hidden porn facilitators, recommended because they are related to the practices around porn usage on smartphones, although under the terms of the Google Play Store they cannot explicitly mention their porn relatedness.

- Third layer recommendation: results related to the primary findings (other games, other social media, other biblical apps, other apps in a similar language)

7. Conclusions

Our findings offer a way to understand the recommendation and redirection algorithm in app stores and to analyse what content is recommended when searching for a “banned” term. In the case of “porn”, the redirections consist largely of either porn-facilitating or porn-inhibiting apps. These findings suggest several directions for future research. One would be to search for the patterns shared between banned apps, using themes such as “weapon”, “violence”, “torture” and “gambling”; based on the recommendations and redirections, we could then see whether the redirects contain some of the same apps or themes. There is also the element of the platforms, the app stores, functioning as gatekeepers for users. It would be interesting to see what their limitations are and to what extent they uphold them. Here also lies the question of the biases the gatekeepers might have and how these show through search results and missing search results. Given this potential bias and the “power” they possess, there is the question of how regulatory they are and how regulatory users think they are or should be. This insight could give us a way of understanding the store's space and its black box. Last but not least, our findings could inform a “manual” for developers on how to “escape” or get around the restrictions of the app stores. This would be interesting to investigate in order to find the loopholes, or to see whether there is a small community of banned apps getting by in the app store because of loopholes in the restrictions.

8. References

- https://android-developers.googleblog.com/2017/02/welcome-to-google-developer-day-at-game.html

- https://android-developers.googleblog.com/2017/08/how-were-helping-people-find-quality.html

- https://android-developers.googleblog.com/2018/06/improving-discovery-of-quality-apps-and.html

Topic revision: r7 - 12 Jan 2019, FernandoVanDerVlist

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.
