webantennE

eParticipation and webantennE

Conventional eParticipation initiatives involve "the use of information and communication technologies to broaden and deepen political participation by enabling citizens to connect with one another and with their elected representatives" (Wikipedia). Ideally, these will "help foster communication and interaction between politicians and government bodies on the one side, and citizens on the other. Internet, mobile phones and interactive television can be used to channel information to citizens and canvass their views" (Europe's Information Society).

Initial webantennE proposals focused on identifying particular source sets that represent on- and offline localities as well as issue areas, and aggregating or summarizing content for each. Rather than create spaces, portals or social networks to invite citizens' perspectives on any range of topics, the aim was to dynamically discover both issues and issue overlap among sources. Proposed channels included Nederlogosphere, Political Nederlogosphere, Dutch NGO sphere and Local Bloggers. webantennE was completed as Issuefeed.net.

Building a source set: A Webantenna use case

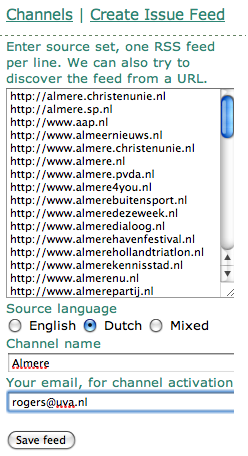

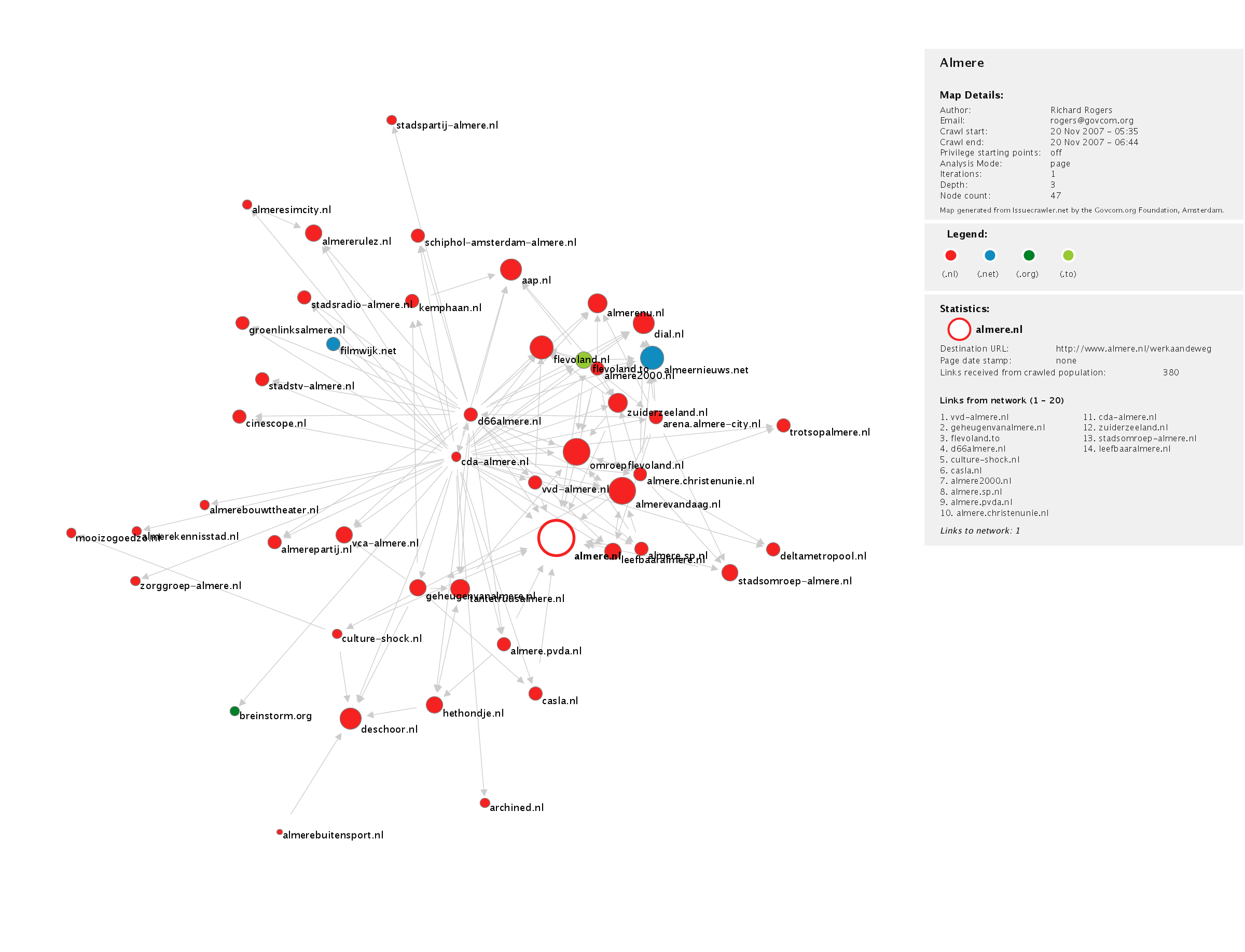

The German chancellor's office, like many government offices all over the world, receives a stack of newspapers and magazines early every morning. The staff browse through the papers and cut out the most important articles. The articles are placed in a newspaper clippings file and handed to the analysts and the spokesperson, who together prepare for the press conference later that morning. They anticipate the questions of the day, and prepare answers as well as talking points.

This story illustrates a particular method of collection (all the major newspapers and magazines) and a method of analysis (cutting out the most important articles). One could think of the Issuefeed in terms of a similar practice.

These days the same chancellor's office often faces a similar question about the major websites. Which websites should be browsed? How does one know a site is important? In other words, it may not be clear to the analysts how to build a list of relevant sites comparable to the list of relevant newspapers.

How to build a source set? The city of Almere, the Netherlands, provided fifteen local URLs that the governmental analysts determined were worth following, in order to have a sense of what issues are arising locally. These Websites included those of the local political parties, the local news channels and neighborhood groups.

How does one know that there are no other significant URLs? The fifteen URLs were entered into the Issue Crawler network location software, which analyzed the Websites' hyperlinks. Following the links out of the sites turned up many additional relevant sites. (The newly discovered sites received links from the original fifteen; see map below.) The new, longer list was sent back to the city staff members, who checked each URL's relevance, e.g., whether the site was active and concerned Almere. Some were removed.

The result was the final list given below. Each URL is entered in Issuefeed.net, and a channel is created.
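
The expansion step described above, following links out of the seed sites and noting which new sites they point to, can be sketched roughly as follows. This is not Issue Crawler's actual algorithm. It is a minimal illustration that assumes the seed pages have already been fetched, and the names `LinkCollector`, `host_of` and `candidate_sites` are hypothetical. It also treats hosts that differ only by a leading `www.` as the same site, which is a simplifying assumption. The candidates it returns would still need the manual relevance check by city staff.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def host_of(url):
    """Return the lower-cased host of a URL, without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def candidate_sites(pages, min_seeds=1):
    """Rank non-seed hosts by how many distinct seed sites link to them.

    `pages` maps each seed URL to the HTML already fetched from it.
    """
    seed_hosts = {host_of(url) for url in pages}
    inlinks = Counter()
    for seed_url, html in pages.items():
        parser = LinkCollector()
        parser.feed(html)
        # Resolve relative links against the seed URL, then reduce to hosts.
        targets = {host_of(urljoin(seed_url, href)) for href in parser.hrefs}
        # Count each seed once per target; skip links that stay in the seed set.
        for host in targets - seed_hosts - {""}:
            inlinks[host] += 1
    ranked = sorted(inlinks.items(), key=lambda item: (-item[1], item[0]))
    return [(host, n) for host, n in ranked if n >= min_seeds]
```
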
Issuefeed checks each URL for an RSS feed. All RSS feeds are read into the analytical engine, which is a trained POS-tagger that fishes out noun phrases. The phrases occurring during the last week are compared to the previous time period, using the log-likelihood algorithm.
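
How Issuefeed itself checks a URL for a feed is not documented here. One common convention is feed autodiscovery, where a page advertises its feed in a `<link rel="alternate">` tag. A minimal sketch under that assumption follows. `FeedLinkFinder` and `find_feeds` are hypothetical names, and accepting Atom feeds alongside RSS is a further assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# MIME types treated as feeds in this sketch (an assumption, not Issuefeed's list).
FEED_TYPES = {"application/rss+xml", "application/atom+xml"}


class FeedLinkFinder(HTMLParser):
    """Collects feed URLs advertised through <link rel="alternate"> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.feeds = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {name: (value or "") for name, value in attrs}
        rels = attr.get("rel", "").lower().split()
        is_feed = attr.get("type", "").lower() in FEED_TYPES
        if "alternate" in rels and is_feed and attr.get("href"):
            # Feed links are often relative, so resolve them against the page URL.
            self.feeds.append(urljoin(self.base_url, attr["href"]))


def find_feeds(base_url, html):
    """Return the feed URLs advertised in an already fetched HTML page."""
    finder = FeedLinkFinder(base_url)
    finder.feed(html)
    return finder.feeds
```

A site for which `find_feeds` returns an empty list would simply contribute no text to the channel in this sketch.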

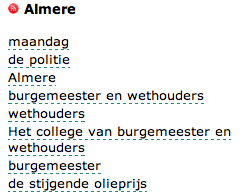

The Issuefeed outputs significant language on the Websites about the city.
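
What counts as significant follows from the comparison between time periods. The exact formula Issuefeed uses is not given here. The sketch below assumes the two-corpus log-likelihood (G2) statistic often used for keyword comparison, applied to lists of noun phrases that have already been extracted. The function names, and the rule of keeping only phrases whose relative frequency rose, are illustrative assumptions.

```python
import math
from collections import Counter


def log_likelihood(a, b, c, d):
    """Two-corpus log-likelihood (G2) for one phrase.

    a, b: occurrences of the phrase in the current and previous period.
    c, d: total phrase occurrences in the current and previous period.
    """
    e1 = c * (a + b) / (c + d)  # expected count in the current period
    e2 = d * (a + b) / (c + d)  # expected count in the previous period
    ll = 0.0
    if a:  # a zero count contributes nothing (0 * log 0 is taken as 0)
        ll += a * math.log(a / e1)
    if b:
        ll += b * math.log(b / e2)
    return 2 * ll


def rising_phrases(current, previous, top=10):
    """Score phrases that are relatively more frequent now than before."""
    cur, prev = Counter(current), Counter(previous)
    c, d = sum(cur.values()), sum(prev.values())
    if c == 0 or d == 0:
        return []  # nothing to compare against
    scored = []
    for phrase, a in cur.items():
        b = prev[phrase]
        if a / c > b / d:
            scored.append((phrase, log_likelihood(a, b, c, d)))
    return sorted(scored, key=lambda item: -item[1])[:top]
```

A phrase that appears equally often in both periods scores zero, so only shifts in usage surface in the output.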

For a description of the software, see about Issue Feed.

almere_top50_20Nov2007.pdf

The new source set. Almere-related Websites, November 2007, inputted into Issue Feed.

http://almere.christenunie.nl

http://almere.sp.nl

http://www.aap.nl

http://www.almeernieuws.nl

http://www.almere.christenunie.nl

http://www.almere.nl

http://www.almere.pvda.nl

http://www.almere4you.nl

http://www.almerebuitensport.nl

http://www.almeredezeweek.nl

http://www.almeredialoog.nl

http://www.almerehavenfestival.nl

http://www.almerehollandtriatlon.nl

http://www.almerekennisstad.nl

http://www.almerenu.nl

http://www.almerepartij.nl

http://www.almerevandaag.nl

http://www.andereoverheid.nl

http://www.anwb.nl

http://www.archined.nl

http://www.architectenwerk.nl

http://www.breinstorm.org

http://www.casla.nl

http://www.cda-almere.nl

http://www.cinescope.nl

http://www.culture-shock.nl

http://www.d66almere.nl

http://www.de-stripheldenbuurt.nl

http://www.deltametropool.nl

http://www.deschoor.nl

http://www.filmwijkalmere.nl

http://www.flevoland.nl

http://www.geheugenvanalmere.nl

http://www.groenlinksalmere.nl

http://www.hethondje.nl

http://www.kemphaan.nl

http://www.koninginnedag4kids.nl

http://www.leefbaaralmere.nl

http://www.mooizogoedzo.nl

http://www.omroepflevoland.nl

http://www.schiphol-amsterdam-almere.nl

http://www.stadscentrum-almere.nl

http://www.stadsomroep-almere.nl

http://www.stadsradio-almere.nl

http://www.stadstv-almere.nl

http://www.tantetruusalmere.nl

http://www.vca-almere.nl

http://www.voedselbank.nl

http://www.vvd-almere.nl

http://www.zorggroep-almere.nl

http://www.zuiderzeeland.nl

| Attachment | Size | Date | Who | Comment |
|---|---|---|---|---|
| almere_URL_list_channel.png | 41 K | 17 Oct 2008 - 14:42 | RichardRogers | |
| almere_issues_issuefeed.png | 12 K | 17 Oct 2008 - 14:42 | RichardRogers | |
| almere_top50_20Nov2007.pdf | 278 K | 17 Oct 2008 - 14:48 | RichardRogers | Almere Issue Crawler Map - November 2007 |
| almere_top50_nov2007.png | 465 K | 17 Oct 2008 - 14:53 | RichardRogers | Almere Issue Crawler Map - November 2007 |
| overheid_nl_onderwerpen.jpg | 114 K | 16 Jul 2007 - 12:13 | SabineNiederer | |
| samenwerkenaannederland_issues.jpg | 294 K | 16 Jul 2007 - 12:40 | SabineNiederer | |
| webantenne.gif | 2 K | 16 Jul 2007 - 10:05 | UnknownUser | Webantenne gif |

Topic revision: r19 - 16 May 2009, RichardRogers

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.
