Team members

Amber de Zeeuw - Anne Wijn - Carlo de Gaetano - Chuyun Zhang - Claudia Pazzaglia - Enedina Ortega Gutierrez - Felix Navarrete - Frederike Lichtenstein - Kim Visbeen - Loes Bogers - Lydia Kollyri - Mandy Theel - Mari Fujiwara - Mischa Szpirt - Nikolaj Frøsig - Nora Lauff - Pien Goutier - Sabine Niederer - Sammy Shawky - Sofía Beatriz Alamo. Facilitators: Loes Bogers and Sabine NiedererIntroduction

The pregnancy space on the web is filled with advice on how to get back into your pre-pregnancy shape, how to eat healthy, exercise, stay attractive, what supplements to take, what products to buy, the kind of attitude required from your partner (to have a partner!), and how to be thoroughly relaxed throughout the process. The content of such imagery, as well as their pervasiveness online is contributing to pressures already experienced by people who are trying to expand their family (e.g. advertising targeted to women of a certain age). Previous artistic research done by the Body Recovery Unit (Loes Bogers & Alexandra Joensson) pointed out that these pressures are perceivably present and mothers and midwives confirmed that such pressures are felt and real, and that more inclusively empowering imagery could help shift the “weight” of the burden that much pregnancy imagery currently perpetuates. (See also: http://thebodyrecoveryunit.wordpress.com/)

In this winter school project, initially we aim to understand and make visible current representations of pregnancy. The eventual goal for the project is to start thinking about ways in which a tip-free, and potentially bias-free image set might be curated collaboratively or on an individual basis to empower pregnant women by intervening into algorithmic and online visual cultures around pregnancy. Critical online content analysis can be informative for design questions regarding AI and machine learning. It has been shown that biases creep into artificial intelligence easily (Sinders 2017; Vincent 2016). One type of bias that is relevant in this context is the so called dataset bias (Chou, Murillo & Ibars 2017). If one trains a neural net with a starting data set of content that is too small, has too little diversity or is curated by a team that is not very diverse in gender, race, ability, and other factors it is likely that the algorithm takes on and amplifies such a bias. At the same time, algorithms like the ones used in Google Image Search are learning and giving back to us what a ‘healthy pregnancy’ looks like. UX designer Caroline Sinders has shown the racial bias embedded in such algorithms by demonstrating Google Image search results on professional hair and unprofessional hair (2017). “What’s shown is that mostly caucasian hair is professional, and black hair is almost entirely depicted under ‘unprofessional hair’” (2017). The question, she continues, concerns who has trained the data, and with which data?

In this research project, we will explore the topic of pregnancy across platforms, with the aim to:

-

Understand and make visible the current representation of pregnancy on the web across languages and across platforms;

-

Map out the current pressures and expectations projected onto pregnant women;

-

Explore machine bias: by comparing search engine results for different queries

-

Formulate strategies for research which can be the basis of a more inclusive and diverse "training set" for machine learning.

Research Questions

What are current representations of pregnancy on the web and across platforms?

Which pressures and ideals are projected onto pregnant women?

Does the (visual) language of pregnancy (and pregnancy advice) differ per geographical region, and/or per platform?

What might be strategies to expand a dataset to be more diverse and inclusive? (for AI)

What might be strategies to approach such research with an ethics of care? (Tiidenberg 2018)

Methodology

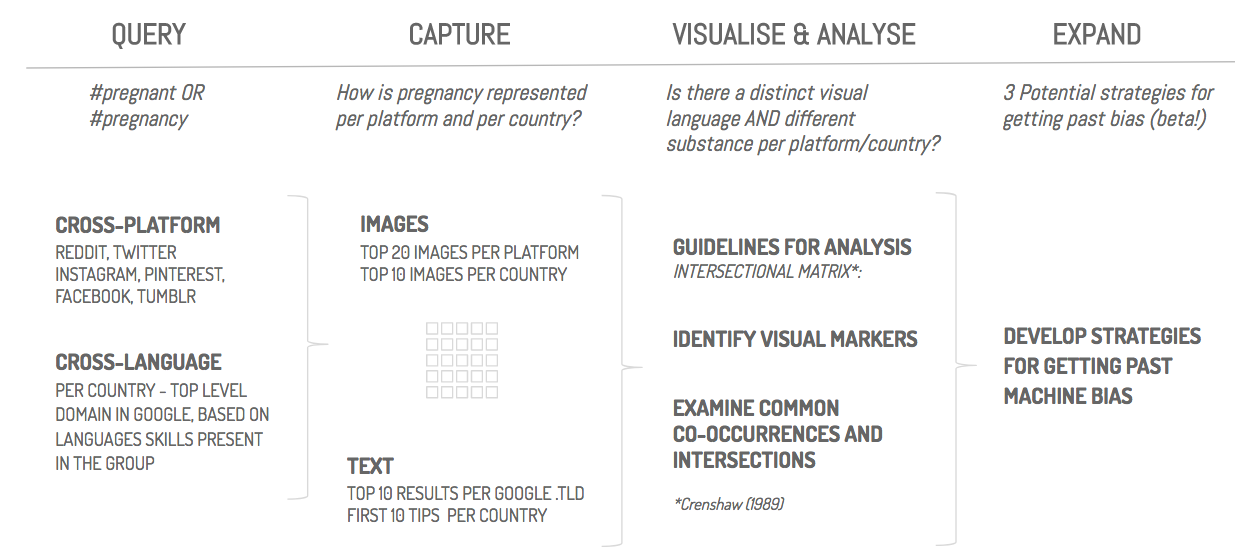

The project consists of two larger case studies: a cross-platform analysis and cross-language analysis, as visualised in the research protocol below.

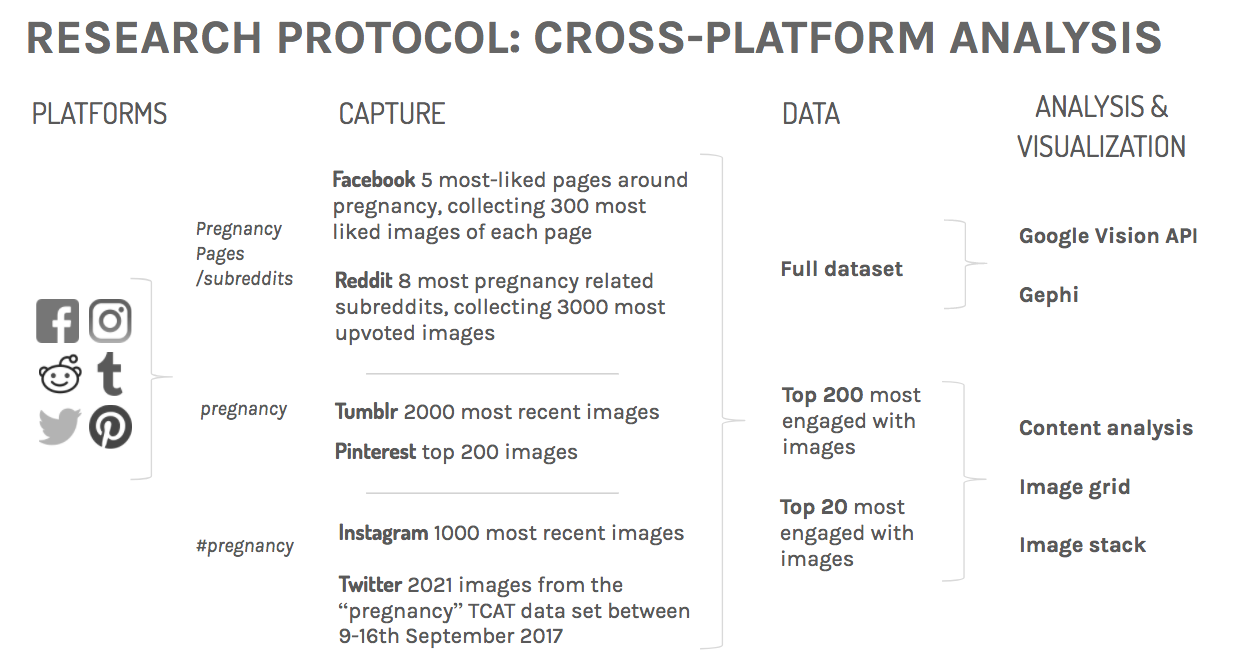

A. Cross-Platform Analysis

Research question

Do different platforms have a distinct visual language for the topic of pregnancy?

Method and data

Findings

<insert Google Vision maps>

<insert content analysis>

Conclusions, summarized:

- Instagram: most (ethnically) diverse

- Facebook: sentimental text

- Pinterest: tips for a picture perfect pregnancy for mostly white women

- (sub-)Reddit: lightweight and fun

- Tumblr: reality check

- Twitter: pregnancy complications

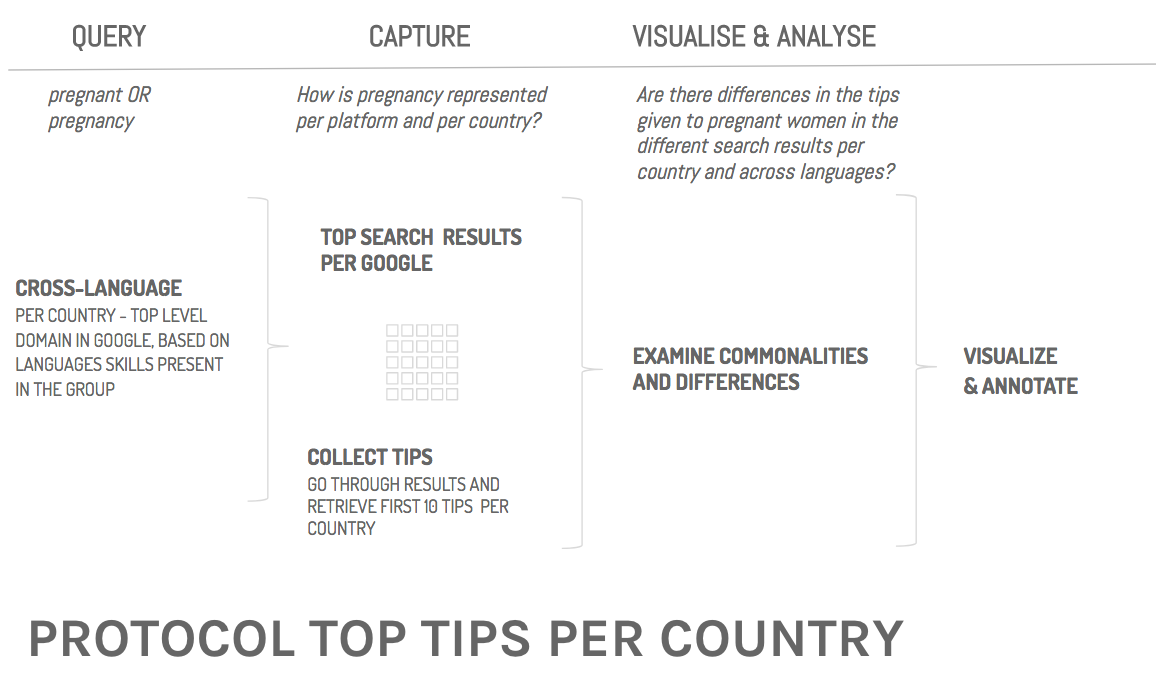

B. Cross-language analysis

Research Question

How is pregnancy represented in different countries/languages?

Preliminary findings:

- similarities are big when countries share the same language

- very particular unique differences per country too (Australia: Do not eat your placenta!)

Method & Data

Data: 27 different Google versions (+ Chinese Baidu).

Query: pregnant OR pregnancy

- Top 10 results, First 10 different tips,

- Top 10 images (pregnancy & “Unwanted”)

Findings

Describe your findings. Consider any counter-intuitive findings.

Conclusion

Discuss and interpret the implications of your findings and make recommendations for future research and application, be it societal, academic or technical (or some combination).

Recommended Readings

Key texts (also available in the reader):

Aiello, G. (2016). Taking Stock. Ethnography Matters. Retrieved from: https://ethnographymatters.net/blog/2016/04/28/taking-stock/

Chou, J., Murillo, O & Ibars, R. (2017) How to recognize exclusion in AI. Medium. 26 September. Retrieved from:

https://medium.com/microsoft-design/how-to-recognize-exclusion-in-ai-ec2d6d89f850

D’Ignazio, C., & Klein, L. F. (2016). Feminist data visualization. In IEEE VIS Conference, Baltimore, October (pp. 23-28). Retrieved from: http://vis4dh.dbvis.de/papers/2016/Feminist%20Data%20Visualization.pdf

Miller, C. (2017). From Sex Object to Gritty Woman: The Evolution of Women in Stock Photos. New York Times. 9 September. Retrieved from: https://www.nytimes.com/2017/09/07/upshot/from-sex-object-to-gritty-woman-the-evolution-of-women-in-stock-photos.html

Further recommended reading:

Levine Beckman, B. (2017, 7 December). The new dad: Fathers swap footballs for tiaras as stock photos evolve. Mashable. Retrieved from: http://mashable.com/2017/12/07/fathers-dads-stock-photos-dadvertising/

Sinders, C. (2017) Design.blog, 23 March, https://design.blog/2017/03/23/caroline-sinders-on-ethical-product-design-for-machine-learning/

Sinders, C. (2017, October). Workshop at SPACE London to collaboratively create a feminist root dataset (including imagery) as agent of change within machine learning systems: http://www.spacestudios.org.uk/art-technology/counting-to-4-0-1-2-3-data-collection-as-art-practice-protest/

Vincent, J. (2016) Twitter taught Microsoft’s AI chatbot to be a racist asshole in less than a day. The Verge. Retrieved from: https://www.theverge.com/2016/3/24/11297050/tay-microsoft-chatbot-racist

Video & podcast:

UX and machine learning designer Caroline Sinders at The AI Conference 2017, especially 2:28-3:47 min on Google image searches for “professional hair”. https://www.youtube.com/watch?v=yfzhe0Ojcdw

Interview with Catherine D’Ignazio on Feminist Data Visualization. DataStori.es. http://datastori.es/109-feminist-data-visualization-with-catherine-dignazio/)

| I | Attachment | Action | Size | Date | Who | Comment |

|---|---|---|---|---|---|---|

| |

Screen Shot 2018-01-31 at 09.55.33.png | manage | 146 K | 31 Jan 2018 - 08:56 | SabineNiederer | project outline |

| |

Screen Shot 2018-01-31 at 10.03.20.png | manage | 180 K | 31 Jan 2018 - 09:05 | SabineNiederer | protocol cross-platform |

| |

Screen Shot 2018-01-31 at 10.03.44.png | manage | 122 K | 31 Jan 2018 - 09:05 | SabineNiederer | protocol top tips |

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors. Ideas, requests, problems regarding Foswiki? Send feedback