You are here: Foswiki>Dmi Web>SummerSchool2007 (17 Dec 2015, UnknownUser)Edit Attach

Digital Methods Summer School 2007: New Objects of Study

2010 | 2009 | 2008 | 2007 How does one do research online? What are the new objects of study, and how do they alter pre-existing methods? These questions provide background to a series of DMI projects listed below, grouped by theme:

How does one do research online? What are the new objects of study, and how do they alter pre-existing methods? These questions provide background to a series of DMI projects listed below, grouped by theme:

Source Distance

On the Web, sources compete to offer the user information. The results of this competition are seen, for instance, in the 'drama' surrounding search engine returns. The focus here is on the prominence of particular sources in different spheres (e.g. blogosphere, news sphere, images), according to different devices (e.g. Google, Technorati, del.icio.us). For example, how far are climate change skeptics from the top of the news? For comparison sake, how far are they from the top of search engine returns? The answer to this and similar 'cross-spherical' inquiries goes a way towards answering the question about the quality of old versus new media. Projects Tools Utilities Resources- Rogers, Richard. Information Politics on the Web. Cambridge, MA: MIT Press (2004). pdf

- Van Couvering, Elizabeth. "New Media? The Political Economy of Internet Search Engines". Presented at the Annual Conference of the International Association of Media & Communications Researchers. Porto Alegre, Brazil, July 25-30, 2004. pdf

- Van Couvering, Elizabeth. "Is Relevance Relevant? Market, Science, and War: Discourses of Search Engine Quality". Journal of Computer Mediated Communication Vol. 12, Issue 3 (2007). http://jcmc.indiana.edu/vol12/issue3/vancouvering.html

Archive

The Web has an ambivalent relationship with time. At one extreme, time is flattened as older, outdated content stands side by side with the new. This is perhaps most apparent in the way some content, whether an interesting statistic or a humorous website, tends to 'resurface' periodically, experienced as new all over again. At the other extreme, there is a drive to be as up-to-date as possible: as epitomized by blogs and RSS feeds. Content producers and consumers may increasingly be said to have a perceived freshness fetish. One aim of the research has been to make temporal relationships on the Web visible. Projects- Space for People: Suggested Fields

- Evaluation of Political Websites in the Netherlands over time

- Palestinian Governmental Websites Before and After Hamas Came to Power

- YouTube website analysis using the Waybackmachine

- Webpage History Generator (currently unsupported)

- Wikipedia Network Analysis Tool

- Webarchivering - KB

- Eerste Hulp bij Website Archivering

- Netarchive.dk collects and preserves the Danish portion of the internet

- http://webarchivist.org/

- Archiving websites. General considerations and strategies by Niels Brügger (The Centre for Internet Research, Aarhus 2005).

Online Communities

Social networking sites such as Myspace, Facebook and Hyves in the Netherlands have stirred anxiety about the public display of the informal. Researchers of social software have concentrated on what especially a non-member - a mother, a prospective boss or a teacher - can see about a person. Here the research concerns how social software has reacted to public concerns, at once allowing and cleansing. In particular attention is paid to what may still be 'scraped' and analysed after media attention has faded. Projects Resources- Nyland, Rob. Social Networking: fertile ground for the branding of youth? Brigham Youth University http://www.gentletyrants.com/wp-content/uploads/2007/03/Nyland_myspace%20branding.pdf

Geo

"There is growing demand for the ability to determine the geographical locations of individual Internet users, in order to enforce the laws of a particular jurisdiction, target advertising, or ensure that a website pops up in the right language. These two separate challenges have spawned the development of clever tricks to obscure the physical location of data, and to determine the physical location of users—neither of which would be needed if the Internet truly meant the end of the tyranny of geography." http://www.yale.edu/lawweb/jbalkin/telecom/puttingitinitsplace.htmlIntroduction

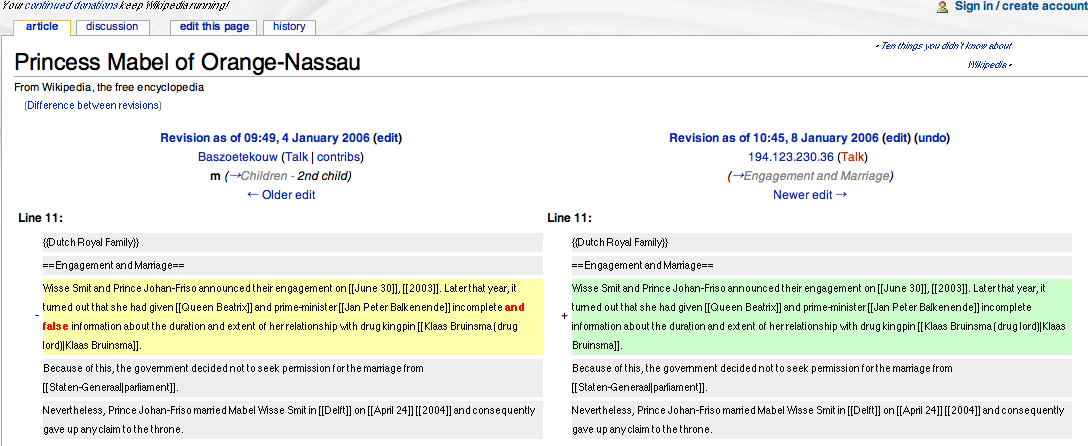

What's it like to live in a global village? On the 28th of August 2007, the Dutch newspaper NRC Handelsblad announced that Dutch Princess Mabel and her husband Prince Johan Friso edited a Wikipedia entry about a 2003 scandal that forced the prince to renounce his claim to the throne. The royal couple took out the reference that Princess Mabel had given “incomplete and false information” to the government, changing it into only “incomplete information”. Wikipedia shows the timestamp and IP-address for every edit made. The IP-address of the computer turned out to be located at the Royal Palace of Queen Beatrix. The NRC used the Wikipedia Scanner to locate the editors. With the Wiki Scanner, which was developed by Virgil Griffith and released on August 14, 2007, one is able to often find the actual names and addresses of the editors. The wikiscanner disaggregates the collective and de-anonymizes geo-locatable authors. Rishab Aiyer Ghosh and Vipul Ved Prakash were among the first to disaggregate the ‘many minds’ collaborating in the open software movement (Ghosh & Prakash, 2000). Their conclusion was that ”free software development is less a bazaar of several developers involved in several projects, more a collation of projects developed single-mindedly by a large number of authors”. In the open source movement, people turned out to be all working on their own projects. Very few people were actually collaborating in developing software. In editing the entry about their own controversial family matters, the Royal family showed similar behavior. What may have come as a shock to the newspaper readers is common on the Web. The practice of web-forensics, of finding out ‘who did it’, is otherwise described by Richard Rogers and Noortje Marres as ‘tracing’ and ‘rubbing’ (Marres & Rogers, 1999). ‘Tracers’ look for traces “that reveal patterns of behaviour and eventually ‘preferences’”, whereas ‘rubbers’ look for meaning given not by the surfer routings, but by webmasters' markings, i.e., the text and hyperlinks on the sites they administer. “Rubbing the linkage of websites treating a particular topic, they aim to reveal potential routings through a thematic network given by the web. The 'Global Positioning System' network, for example, discloses aspects that are currently defining the phenomenon (navigation, us military, consumer product, terrorist asset, environmental monitoring), as well as the relations between some of the more fascinating players”. The Wiki Scanner in this context could be considered a rubbing and tracing device, lifting the wikipedia surface to uncloak the anonymous writers and reveal their traces. The global village turns out to be exactly that: a village, where it's hard to remain anonymous amongst the tracers and rubbers. The edited entry in Wikipedia

The edited entry in Wikipedia

Geo-location

Referred to as the 'global village' (a term Mcluhan used to describe electronic mass media), the Web has been advertised as a space for globalization. Internet browsers (with telling names such as ‘Internet Explorer’ or ‘Safari’) offer part of the equipment one needs to navigate the unknown. With the revenge of geography, there is a renewed interest for the geo-location of various online objects, such as the user, the network, the issue, or the data. In digital studies, ‘location’ can refer to various things. The question “Is it big in Japan?”, would normally have been asked in reference to fans, based on local sales data. Nowadays the quantity of fans could conceivably be measured differently. For any given song or YouTube video, one could strive to geo-locate its fan base. The recent project to ascertain favorite brands of the some 4 million Hyves users in the Netherlands revealed the locality of the locale. Japanese brands were hardly present. Dutch and Western brands prevail. See graphics The question “Where is the user based?” could be answered by looking at the user’s ip-address, the registration of his/her homepage or the online profile in a social networking site. The question: “Where is the issue based?” requires a different approach. In scraping of various spheres (the news, the Web, the blogosphere), one may find out where the issue resonates most. This approach was applied in the Issue Animals projects, which reveals which animals that are endangered by climate change, are most often referred to (in both text and image) on the Web, in the news and in the blogosphere. Finding out where the network is, calls for co-link analysis of various actors around a certain topic. Projects Resources- Hine, Christine. Virtual Ethnography. London: Sage, 2004. - http://www.soc.surrey.ac.uk/staff/chine/

- Goldsmith, Jack & Tim Wu, Who Controls the Internet?: Illusions of a Borderless World. New York: Oxford University Press, 2006.

- Ghosh, Rishab Aiyer & Vipul Ved Prakash, The Orbiten Free Software Survey, in: First Monday, volume 5, number 7, July 2000, URL: http://firstmonday.org/issues/issue5_7/ghosh/index.html

- Marres, Noortje & Richard Rogers, To Trace or to Rub: Screening the Web Navigation Debate. Mediamatic, 1999. http://www.mediamatic.net/article-5726-en.html

- Miller, Daniel & Don Slater. The Internet: An Ethnographic Approach. New York: Berg Publishers, 2000.

- http://www.archiefschool.nl/docs/ehbw.pdf

- http://www.nrc.nl/binnenland/article757820.ece/Opkomst_en_ondergang_van_extreemrechtse_sites

- http://www.nrc.nl/binnenland/article759698.ece/Mabel_en_Friso_pasten_lemma_aan

- Geo in Code and Infrastructure

Hyperlink

In 1965, Ted Nelson proposed a file structure for "the complex, the changing and the indeterminate". The hyperlink was not only an elegant solution to the problem of complex organization, he argued, but would ultimately benefit creativity and promote a deeper understanding of the fluidity of human knowledge. High expectations have always accompanied the link. More recently, with the work of search engines, the link has been revealed as an indicator of reputation: researchers must now account for the reorganization of the link itself as a symbolic act with political and economic consequences. Projects Tools- Google Scraper

- Google Blogsearch Tool (using the scraping service Open Kapow)

- Technorati Scraper (using the scraping service Open Kapow)

- Link Ripper

- URL List Analyzer

- robots.txt stripper - Display a site's robot exclusion policy

- Tag cloud to svg tool

- boyd, danah. "The biases of links," apophenia. http://www.zephoria.org/thoughts/archives/2005/08/07/the_biases_of_links.html

- Bush, Vannevar. "As we may think," The Atlantic Monthly, Vol. 176, No. 1 (1945): 101-108.

- Elmer, Greg. "Hypertext on the Web: The Beginnings and Ends of Web Path-ology." Space and Culture, 10, 1-14

- Landow, George. Hyper/Text/Theory, Baltimore, MD: John Hopkins University (1994). http://cyberartsweb.org/cpace/ht/jhup/contents.html

- Nelson, Theodor. "Complex information processing: a file structure for the complex, the changing and the indeterminate". ACM/CSC-ER Proceedings of the 1965 20th national conference, New York: ACM Press (1965), 84-100.

- Nelson, Theodor. "Xanalogical structure, needed now more than ever: parallel documents, deep links to content, deep versioning, and deep re-use." ACM Computing Surveys (CSUR). Vol. 31, Issue 4 (December 1999) http://www.cs.brown.edu/memex/ACM_HypertextTestbed/papers/60.html

- Shirky, Clay. "Ontology is Overrated: Categories, Links, and Tags" Clay Shirky's Writings about the Internet. http://www.shirky.com/writings/ontology_overrated.html

Scrape

Scraping and Research

'Scraping' is often used to describe the extraction of web content for the purposes of 'syndicating' or feeding it. Scraping also is employed to describe content extraction more generally for further analysis. Many of the digital methods described in the digital methods initiative rely on scraping content, placing it into a database and counting. An important aspect of scraping is understanding the underlying structure of Web content, e.g., a Uniform Resource Locator or the name space (URL), the underlying object structure of HTML called Document Oriented Model (DOM) and the distribution and management of the Internet Protocol (IP). Once the structure is understood, a scraping procedure, for example, can extract the content on a site that is publicly available and aggregated in one space, but also single bits of content on the site that has not been reorganized. For example, in the scraping of the Dutch social networking site, hyves.nl, there is a list of favorite brands of the users. The list is neatly organized with brand names and values next to the names. Each brand has an id number. When rolling over the individual brands, one notes that many brand id numbers are not on the favorite brands list. All the missing brands, or least favorite ones, may be scraped and counted. The outcome of the analysis revealed a hyves online community far less devoted to the popular brands than to single brands or to "no logo." The project may be viewed here.

Scrapability and Scraper Maintenance

The initial question is whether to scrape at all. For example, engines and other aggregators often offer APIs (Application Programming Interfaces) that promise to serve the data you seek. One issue is that the results returned are limited per day or time frame as well as per quantity. Flickr for instance provides an API to retrieve machine tags (currenly a new development in the tagosphere) from its site but limits the output to only sixteen results. Thus many projects require the construction of a scraper, specifically developed to facilitate the needs for research in question. When scraping the web, specific decisions have to be made concerning what will be scraped and what will be left behind. Should the entire site or blog be scraped? If so, where does the site or blog begin and end? Scraping itself must also be scrutinized, as the issue is not only what to scrape but also how. By building one's own scraper, certain of those device related (API) issues can be overcome. For example, for the Flickr machine tag, one could decide to scrape a certain part of the site by using its DOM structure to get only the data from an exact location in the different pages. There are several options from which one could choose to build a scraper. PHP, XSLT/XQuery and Shell are some well known programming languages often used to build scrapers. There are also applications available on the web which enable non-programmers to be able to build their own scrapers. such as OpenKapow and Dapper. Because they focus on crossing data sets, the project outputs are sometimes called MashUps. See the project, WeScrape. Another issue concerns scraper maintenance. The web is dynamic, in a continual state of flux, and this poses important restrictions on the scrapability of the web. Webmasters and device makers update their sites, incorporate new technologies and coding languages, and restrict the content by legal use agreements.Scrapers - how to build them yourself

- Tutorial: How to create a scraper using Solvent and Piggybank Firefox plugins: ScraperTutorialForSolventAndPiggyBank

- Calishain, tara & Kevin Hemenway. Spidering Hacks. Sebastopol: O'Reilly & Associates (2004).

Tag

Tag and other user-generated metadata Tagging systems have become increasingly popular for user-generated categorization and recommendation on the web. “Many minds,” as collectives of space or device users are called, use tagging devices to mark resources such as images, movies and web pages (Flickr, Youtube, Del.icio.us). The tag is an example of a larger phenomenon in two contexts, markers and folksonomy. Markers are user-generated metadata that collectively inform the folksonomy. Folksonomy (folks making taxonomies) is a user generated index, the practice and method of collaborative categorization using freely chosen markers. Other markers besides tags are comments on blogs, edits on wikipedia, and anchor texts to mark web pages. Although tagging is a collective user-generated categorization and recommendation method, the devices that make these markers searchable impose different organization standards on marking practices and are mostly device specific (e.g. anchor texts in Google, tags in Technorati and Del.icio.us). Creative uses of these organization standards by users have however led to device changes (e.g. the for: tag in delicious). Marking practices are mostly device specific, meaning markers from one spherical device are not indexed and made searchable in other devices. Cross-device related tag analysis aims to measure how tagging practices in different spheres recommend the same information by comparing user-generated tags across spheres (PopTag project). The extent to which marking practices from one spherical device influence the recommendation of the other is researched in the case study link bombing/google bombing. Link bombs originated in the blogosphere with the intention to affect ranking in web device Google. Tools Projects- PopTag - Cross-device popular tags

- Marlow, Cameron, Mor Naaman, danah boyd, Marc Davis. "Position Paper, Tagging, Taxonomy, Flickr, Article, To Read." Collaborative Web Tagging Workshop (at WWW 2006). Edinburgh, Scotland, May 22, 2006. http://www.danah.org/papers/

- Tatum, Clifford. "Deconstructing Google bombs: A breach of symbolic power or just a goofy prank?" First Monday, vol. 10, no. 10, October 2005. http://www.firstmonday.org/issues/issue10_10/tatum/

Networked Content

ProjectsMapping Controversies

Projects| I | Attachment | Action | Size | Date | Who | Comment |

|---|---|---|---|---|---|---|

| |

wikihistoryeditmabelfriso.jpg | manage | 392 K | 31 Aug 2007 - 14:09 | SabineNiederer | wiki mabel friso edit |

| |

Govcom.org_Jubilee_-_a_set_on_Flickr.png | manage | 89 K | 22 Jan 2010 - 13:59 | AnneHelmond | GovCom jubilee |

| |

Digital_Methods_Initiative_-_a_set_on_Flickr.png | manage | 95 K | 22 Jan 2010 - 14:09 | AnneHelmond | |

| |

brandlink1.jpg | manage | 6 K | 29 Aug 2007 - 13:31 | UnknownUser | |

| |

brandlink2.jpg | manage | 4 K | 28 Aug 2007 - 23:09 | UnknownUser | |

| |

googlenav.jpg | manage | 11 K | 28 Aug 2007 - 23:10 | UnknownUser | |

| |

googleres.jpg | manage | 10 K | 28 Aug 2007 - 23:10 | UnknownUser |

Edit | Attach | Print version | History: r36 < r35 < r34 < r33 | Backlinks | View wiki text | Edit wiki text | More topic actions

Topic revision: r36 - 17 Dec 2015, UnknownUser

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors. Ideas, requests, problems regarding Foswiki? Send feedback